We run into optimization problems in many real-world situations where we need to find the minimum or maximum of a function.

Think of a function as a mathematical representation of a system; finding its minimum or maximum can be critical in applications such as machine learning, engineering, finance, and others.

Picture a landscape of hills and valleys, where our goal is to find the lowest point (the minimum) so that we reach our destination as quickly as possible.

We often use gradient descent algorithms to solve such optimization problems. These algorithms are an iterative optimization method that minimizes a function by taking steps in the direction of steepest descent (the negative gradient).

The gradient points in the direction of the function's steepest increase, so moving in the opposite direction leads us toward a minimum.

What Exactly Is the Gradient Descent Algorithm?

Gradient descent is a popular iterative optimization method for finding the minimum of a function (or, with the sign of the step flipped, the maximum).

It is an important tool in several fields, including machine learning, deep learning, artificial intelligence, engineering, and finance.

The algorithm's basic principle rests on its use of the gradient, which indicates the direction of steepest increase in the function's value.

The algorithm navigates the function's landscape toward a minimum by repeatedly stepping in the direction opposite to the gradient, refining the solution iteratively until it converges.
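
The idea above is commonly written as a single update rule. The iterate index k and the step-size symbol α are notation chosen here for illustration; ∇f(x_k) is the gradient at the current point, which for a function of one variable is simply the derivative f'(x_k).

```latex
x_{k+1} = x_k - \alpha \, \nabla f(x_k), \qquad k = 0, 1, 2, \dots
```

The minus sign is what makes this a descent: each step moves against the direction in which the function increases fastest.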

Why Do We Use Gradient Descent Algorithms?

First, they can be used to solve a wide variety of optimization problems, including those with high-dimensional spaces and complex functions.

Second, they can find good solutions quickly, especially when an analytical solution is unavailable or computationally expensive.

Gradient descent methods also scale well and can handle large datasets efficiently.

As a result, they are widely used in machine learning, for example to train neural networks so that they learn from data and adjust their parameters to reduce prediction error.

A Detailed Step-by-Step Example of Gradient Descent

Let's look at a more detailed example to better understand how gradient descent works.

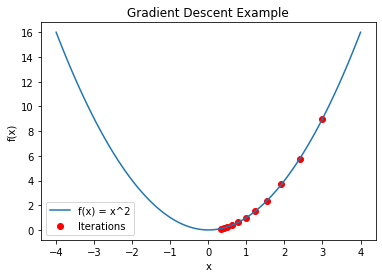

Consider the function f(x) = x^2, whose graph is a simple parabola in the 2D plane with its minimum at (0, 0). We will use the gradient descent algorithm to find this minimum point.

Step 1: Initialization

The gradient descent algorithm starts by initializing the value of the variable x, denoted x₀.

The initial value can have a significant effect on the algorithm's performance.

Random initialization and using prior knowledge of the problem are two common approaches. In our case, assume x₀ = 3 at the start.

Step 2: Compute the Gradient

Next, the gradient of the function f(x) at the current point x₀ must be computed.

The gradient indicates the slope, or rate of change, of the function at that particular point.

We take the derivative of f(x) = x^2 with respect to x, which gives f'(x) = 2x. Substituting x₀ = 3 into the derivative, we get the gradient at x₀ as 2 * 3 = 6.
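
As a quick sanity check on this step, the derivative can be evaluated in code and compared against a numerical estimate. This is a minimal sketch: the helper name `numerical_derivative` and the step size `h` are illustrative choices, not part of the original example.

```python
def f(x):
    """The example function f(x) = x^2."""
    return x ** 2


def f_prime(x):
    """Analytic derivative of f: f'(x) = 2x."""
    return 2 * x


def numerical_derivative(func, x, h=1e-6):
    """Central finite-difference estimate of the derivative (hypothetical helper)."""
    return (func(x + h) - func(x - h)) / (2 * h)


x0 = 3.0
analytic = f_prime(x0)                  # 2 * 3
numeric = numerical_derivative(f, x0)   # should agree closely with the analytic value
print(analytic, numeric)
```

The two values should agree to several decimal places; a large gap would point to a mistake in the hand-derived derivative.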

Step 3: Update the Parameter

Using the gradient, we update the value of x as follows: x = x₀ – α * f'(x₀), where α (alpha) denotes the learning rate.

The learning rate is a hyperparameter that determines the size of each step in the update process. Setting an appropriate learning rate matters because a learning rate that is too low can cause the algorithm to need many iterations to reach the minimum.

A learning rate that is too high, on the other hand, can cause the algorithm to overshoot the minimum or fail to converge. For this example, let's use a learning rate of α = 0.1.
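
With these numbers, a single update can be carried out directly. The sketch below only applies the update formula from this step once; the variable names are illustrative.

```python
def f_prime(x):
    """Derivative of f(x) = x^2."""
    return 2 * x


alpha = 0.1   # learning rate chosen for this example
x0 = 3.0      # starting point from Step 1

# One gradient descent update: x1 = x0 - alpha * f'(x0) = 3 - 0.1 * 6,
# which is 2.4 up to floating-point rounding.
x1 = x0 - alpha * f_prime(x0)
print(x1)
```

The new value is closer to 0 than the starting point, which is the behaviour we want from a single step.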

Step 4: Repeat

Once we have the updated value of x, we repeat Steps 2 and 3 for a predetermined number of iterations or until the change in x becomes very small, which indicates convergence.

In each iteration the method computes the gradient, updates the value of x, and carries the process forward, allowing it to move closer to the minimum.

Step 5: Convergence

After enough iterations, the procedure converges: it reaches a point where further updates no longer change the function's value significantly.

In our case, as the iterations proceed, x approaches 0, which is where f(x) = x^2 attains its minimum. The number of iterations needed for convergence depends on factors such as the chosen learning rate and the complexity of the function being optimized.
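
Steps 1 through 5 can be put together in a short loop. This is a minimal sketch rather than a reference implementation: the function name `gradient_descent`, the tolerance `tol`, and the iteration cap `max_iters` are assumptions introduced for illustration.

```python
def f(x):
    """The example function f(x) = x^2."""
    return x ** 2


def f_prime(x):
    """Derivative of f: f'(x) = 2x."""
    return 2 * x


def gradient_descent(grad, x0, alpha, max_iters=1000, tol=1e-8):
    """Repeat the update x <- x - alpha * grad(x) until the change in x
    drops below tol or max_iters is reached. Returns (x, iterations used)."""
    x = x0
    for i in range(1, max_iters + 1):
        x_new = x - alpha * grad(x)
        if abs(x_new - x) < tol:   # change in x is tiny: treat as converged
            return x_new, i
        x = x_new
    return x, max_iters


x_min, iterations = gradient_descent(f_prime, x0=3.0, alpha=0.1)
print(x_min, f(x_min), iterations)
```

Stopping on a small change in x is only one possible convergence test; checking the size of the gradient or the change in f(x) would work just as well here.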

Choosing the Learning Rate (α)

Choosing a suitable learning rate (α) is essential for gradient descent to work well. As noted earlier, a learning rate that is too low can cause slow convergence, while one that is too high can cause overshooting and failure to converge.

Striking the right balance is essential so that the algorithm converges to the intended minimum as efficiently as possible.

In practice, tuning the learning rate is often a process of trial and error. Researchers and practitioners routinely try several learning rates to see how each affects the algorithm's convergence on their particular problem.

Handling Non-Convex Functions

Although the preceding example uses a simple convex function, many real-world optimization problems involve non-convex functions with multiple local minima.

When gradient descent is applied in such cases, the method can converge to a local minimum rather than the global minimum.

Several more advanced variants of gradient descent can help with this problem. Stochastic Gradient Descent (SGD) is one such method; it introduces randomness by selecting a random subset of data points (known as a mini-batch) to compute the gradient in each iteration.

This random sampling can help the algorithm escape local minima and explore new regions of the function's landscape, which improves the chances of finding a better minimum.
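
To make the mini-batch idea concrete, here is a small sketch of SGD fitting a single slope parameter. Everything about the setup is an assumption for illustration: the synthetic data (y = 2x with no noise), the mean squared error loss, the batch size, and the learning rate are not taken from the original text.

```python
import random

random.seed(0)  # fixed seed so the run is repeatable

# Hypothetical dataset: points on the line y = 2 * x.
xs = [i / 10 for i in range(1, 101)]
data = [(x, 2.0 * x) for x in xs]


def minibatch_gradient(w, batch):
    """Gradient of the mean squared error mean((w*x - y)^2) with respect to w,
    computed on the given mini-batch only."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


w = 0.0           # initial guess for the slope
alpha = 0.005     # learning rate (illustrative value)
batch_size = 10

for step in range(500):
    batch = random.sample(data, batch_size)   # random subset of the data
    w -= alpha * minibatch_gradient(w, batch)

print(w)  # should end up very close to the true slope of 2
```

Each step sees only ten of the hundred points, so the gradient is a noisy estimate of the full-data gradient; that noise is the source of the exploratory behaviour described above.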

Adam (Adaptive Moment Estimation) is another prominent variant: an adaptive-learning-rate optimization method that combines the benefits of both RMSprop and momentum.

Adam adapts the learning rate of each parameter based on information from previous gradients, which may lead to better convergence on non-convex functions.
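
The following is a minimal single-variable sketch of the Adam update, applied to f(x) = x^2 for simplicity. The function name `adam_minimize` is hypothetical, the values of `beta1`, `beta2`, and `eps` are the commonly used defaults, and `alpha` and `steps` are illustrative choices.

```python
import math


def f_prime(x):
    """Derivative of f(x) = x^2."""
    return 2 * x


def adam_minimize(grad, x0, alpha=0.1, beta1=0.9, beta2=0.999,
                  eps=1e-8, steps=1000):
    """Single-variable Adam: keeps running averages of the gradient (m)
    and of the squared gradient (v), and scales each step by them."""
    x = x0
    m = 0.0   # first-moment estimate (momentum-like term)
    v = 0.0   # second-moment estimate (RMSprop-like term)
    for t in range(1, steps + 1):
        g = grad(x)
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g * g
        m_hat = m / (1 - beta1 ** t)   # bias correction for the zero start
        v_hat = v / (1 - beta2 ** t)
        x -= alpha * m_hat / (math.sqrt(v_hat) + eps)
    return x


x_adam = adam_minimize(f_prime, x0=3.0)
print(x_adam)
```

In a multi-parameter model, m and v are tracked separately for every parameter, which is what gives each one its own effective learning rate.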

These more sophisticated variants of gradient descent have proven effective on increasingly complex functions and have become standard tools in machine learning and deep learning, where non-convex optimization problems are common.

Step 6: Visualize Your Progress

Let's visualize the progress of the gradient descent algorithm to better understand its iterative process. Consider a graph whose x-axis represents the iteration number and whose y-axis represents the function value f(x).

As the algorithm iterates, the value of x approaches zero and, as a result, the function value decreases with each step. Plotted on a graph, this shows a clear downward trend that reflects the algorithm's progress toward the minimum.
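
One lightweight way to see this trend without a plotting library is to record f(x) at every iteration and print a rough text chart. This sketch assumes the same f(x) = x^2, x₀ = 3, and α = 0.1 as the worked example; the number of iterations and the bar scaling are arbitrary choices.

```python
def f(x):
    """The example function f(x) = x^2."""
    return x ** 2


def f_prime(x):
    """Derivative of f: f'(x) = 2x."""
    return 2 * x


alpha = 0.1
x = 3.0
history = [f(x)]            # function value before any update

for _ in range(20):
    x = x - alpha * f_prime(x)
    history.append(f(x))    # function value after each update

# Iteration number, function value, and a bar whose length tracks the value.
for i, value in enumerate(history):
    bar = "#" * round(value * 5)
    print(f"{i:2d}  {value:8.4f}  {bar}")
```

The bars shrink from one row to the next, which is the downward trend a line plot of the same `history` list would show.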

Step 7: Fine-Tuning the Learning Rate

The learning rate (α) is a key factor in the algorithm's performance. In practice, finding a suitable learning rate often requires trial and error.

Some optimization techniques, such as learning rate schedules, change the learning rate during training, starting with a higher value and gradually lowering it as the algorithm approaches convergence.

This approach helps balance rapid progress at the start with stability near the end of the optimization process.
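
A simple schedule of this kind is exponential decay, where the learning rate is multiplied by a fixed factor after every step. The sketch below is one possible form under assumed values: the starting rate `alpha0`, the factor `decay`, and the function name `scheduled_descent` are all illustrative.

```python
def f_prime(x):
    """Derivative of f(x) = x^2."""
    return 2 * x


def scheduled_descent(grad, x0, alpha0=0.4, decay=0.95, steps=200):
    """Gradient descent with an exponentially decaying learning rate:
    alpha_k = alpha0 * decay**k (hypothetical schedule for illustration)."""
    x = x0
    alphas = []
    for k in range(steps):
        alpha_k = alpha0 * decay ** k   # large steps early, small steps later
        alphas.append(alpha_k)
        x = x - alpha_k * grad(x)
    return x, alphas


x_final, alphas = scheduled_descent(f_prime, x0=3.0)
print(x_final, alphas[0], alphas[-1])
```

One caveat with any decaying schedule: if the rate shrinks too quickly, the steps can become negligible before the minimum is reached, so the decay factor needs tuning just like the learning rate itself.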

Another Example: Minimizing a Quadratic Function

Let's look at another example to better understand gradient descent.

Consider the quadratic function g(x) = (x – 5)^2, whose graph is again a parabola. Like the first example, this function has a single minimum, located at x = 5. To find this minimum, we will use gradient descent.

1. Initialization: Let's start with x₀ = 8 as our starting point.

2. Compute the gradient of g(x): g'(x) = 2(x – 5). Substituting x₀ = 8, the gradient at x₀ is 2 * (8 – 5) = 6.

3. Update: With α = 0.2 as our learning rate, we update x as follows: x = x₀ – α * g'(x₀) = 8 – 0.2 * 6 = 6.8.

4. Repeat: We repeat steps 2 and 3 as many times as needed until convergence is reached. Each iteration moves x closer to 5, the minimizer of g(x) = (x – 5)^2.

5. Convergence: The method eventually converges to x = 5, where g(x) = (x – 5)^2 attains its minimum.
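
The five steps above can be sketched in code as follows. The loop length of 50 iterations is an arbitrary choice for illustration; the starting point and learning rate are the ones used in the list.

```python
def g_prime(x):
    """Derivative of g(x) = (x - 5)^2: g'(x) = 2(x - 5)."""
    return 2 * (x - 5)


alpha = 0.2   # learning rate from step 3
x = 8.0       # starting point from step 1

# First update, matching the hand calculation 8 - 0.2 * 6 = 6.8.
first_step = x - alpha * g_prime(x)

# Repeat the update; each pass shrinks the distance to 5.
for _ in range(50):
    x = x - alpha * g_prime(x)

print(first_step, x)
```

With α = 0.2, every update multiplies the distance to 5 by 1 – 2 * 0.2 = 0.6, which is why the iterates close in on the minimum so steadily.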

Comparing Learning Rates

Let's compare how quickly gradient descent converges at different learning rates, say α = 0.1, α = 0.2, and α = 0.5, in our new example. A lower learning rate (e.g., α = 0.1) takes smaller steps and therefore needs more iterations to get close to the minimum.

A higher learning rate converges faster, up to a point. For this particular function, α = 0.5 lands exactly on x = 5 in a single step, because x – 0.5 * 2(x – 5) = 5. Rates between 0.5 and 1 overshoot and oscillate around the minimum while still converging, and rates of 1 or more fail to converge at all.
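
A small experiment makes the comparison concrete by counting how many updates each learning rate needs to get within a fixed distance of x = 5. The helper name `iterations_to_converge`, the tolerance, and the iteration cap are assumptions made for this sketch.

```python
def g_prime(x):
    """Derivative of g(x) = (x - 5)^2."""
    return 2 * (x - 5)


def iterations_to_converge(alpha, x0=8.0, target=5.0, tol=1e-6, max_iters=10_000):
    """Count updates until |x - target| < tol (hypothetical helper).
    Returns max_iters if the tolerance is never reached."""
    x = x0
    for i in range(1, max_iters + 1):
        x = x - alpha * g_prime(x)
        if abs(x - target) < tol:
            return i
    return max_iters


results = {alpha: iterations_to_converge(alpha) for alpha in (0.1, 0.2, 0.5)}
for alpha, count in results.items():
    print(f"alpha = {alpha}: {count} iterations")
```

Trying a rate such as 1.0 in the same helper is instructive: x bounces between 8 and 2 forever, so the function returns the iteration cap.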

A Multimodal Example: Handling a Non-Convex Function

Consider h(x) = sin(x) + 0.5x, a non-convex function.

This function has multiple local minima and maxima. Depending on the starting point and the learning rate, standard gradient descent may converge to any one of the local minima.

We can address this by using more advanced optimization techniques such as Adam or stochastic gradient descent (SGD). These methods use adaptive learning rates or random sampling to explore different regions of the function's landscape, which increases the chances of finding a better minimum.
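
The dependence on the starting point is easy to demonstrate with plain gradient descent. In the sketch below, the two starting points, the learning rate, and the iteration count are illustrative choices. The local minima of h lie where h'(x) = cos(x) + 0.5 = 0 and sin(x) < 0, that is at x = 4π/3 + 2πk for integer k.

```python
import math


def h_prime(x):
    """Derivative of h(x) = sin(x) + 0.5x: h'(x) = cos(x) + 0.5."""
    return math.cos(x) + 0.5


def descend(x0, alpha=0.1, steps=500):
    """Plain gradient descent on h from the starting point x0."""
    x = x0
    for _ in range(steps):
        x = x - alpha * h_prime(x)
    return x


x_a = descend(3.0)    # settles in the local minimum near 4*pi/3
x_b = descend(-1.0)   # settles in a different local minimum near -2*pi/3

print(x_a, 4 * math.pi / 3)
print(x_b, -2 * math.pi / 3)
```

Same function, same learning rate, two different answers: each run simply rolls into the valley nearest its starting point.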

Conclusion

Gradient descent algorithms are powerful optimization tools used widely across many industries. They find the minimum (or maximum) of a function by repeatedly updating parameters based on the direction of the gradient.

Because of the algorithm's iterative nature, it can handle high-dimensional spaces and complex functions, which makes it indispensable in machine learning and data processing.

With a carefully chosen learning rate and advanced variants such as stochastic gradient descent and Adam, gradient descent can cope with real-world complexity and contribute substantially to technological progress and data-driven decision-making.
