Natural Language Processing (NLP) is going through a new wave of progress, and Hugging Face datasets are at the forefront of this trend. In this article, we look at why Hugging Face datasets matter.

We will also see how they can be used to train and evaluate NLP models.

Hugging Face is a company that provides developers with a wide variety of datasets.

Whether you are a beginner or an experienced NLP practitioner, the datasets available on Hugging Face will be useful to you. Join us as we explore the field of NLP and learn about the power of Hugging Face datasets.

First, what is NLP?

Natural Language Processing (NLP) is a branch of artificial intelligence that studies how computers interact with human (natural) languages. NLP involves building models that can understand and interpret human language, so that algorithms can perform tasks such as language translation, sentiment analysis, and text generation.

NLP is used in many areas, including customer service, marketing, and healthcare. Its goal is to let computers interpret and understand human language, written or spoken, in a way that comes close to how people do.

Introducing Hugging Face

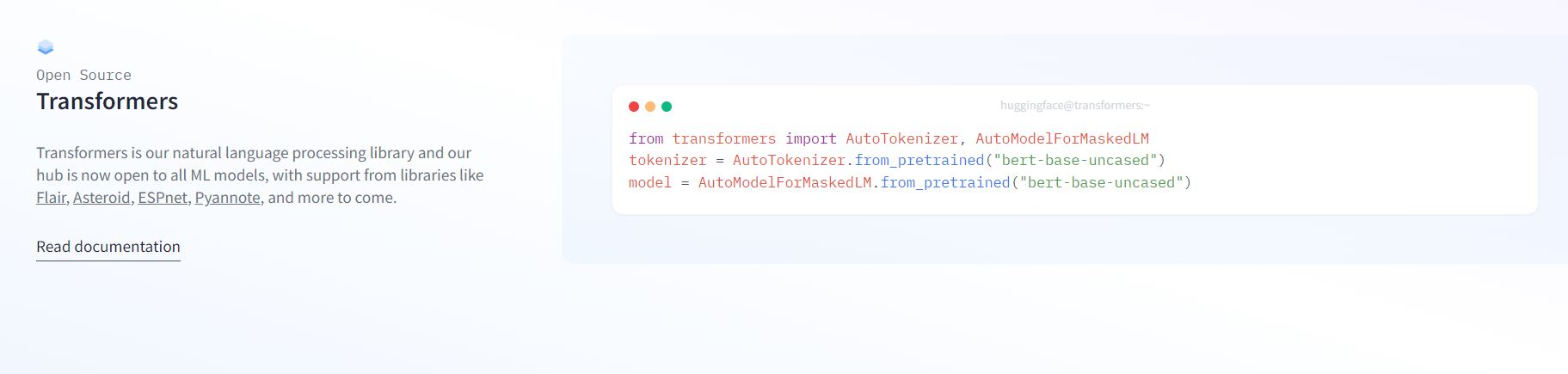

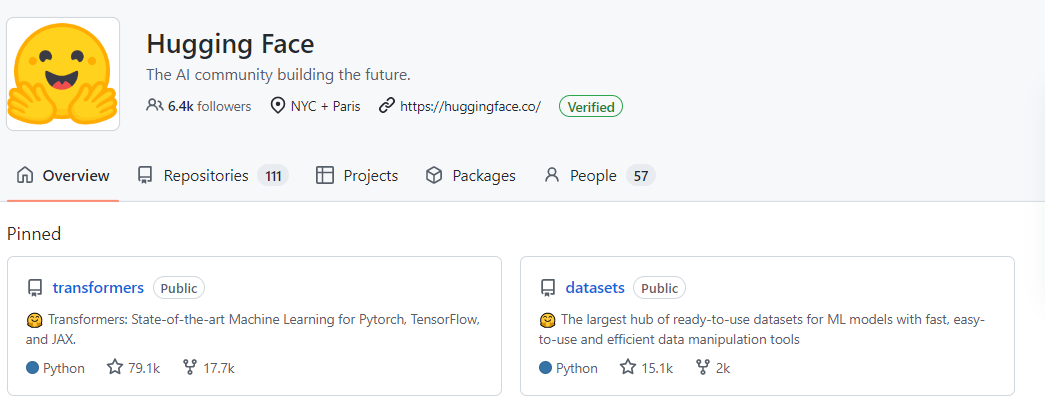

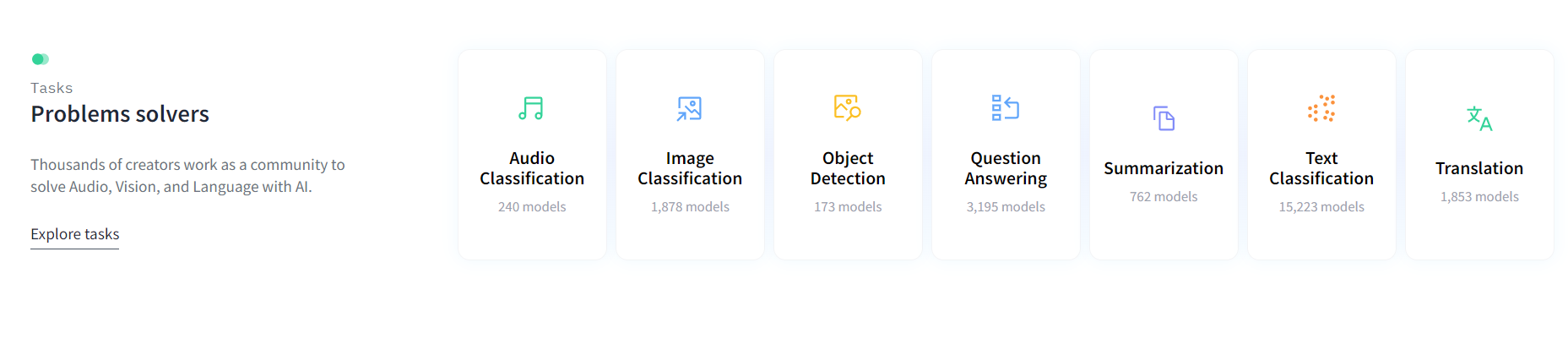

Hugging Face is a company focused on natural language processing (NLP) and machine learning technology. It provides many resources that help developers push the field of NLP forward. Its best-known product is the Transformers library.

The library is built for natural language processing applications and provides pretrained models for a range of NLP tasks, such as language translation and question answering.

Beyond the Transformers library, Hugging Face offers a platform for sharing machine learning datasets. This lets developers quickly access high-quality datasets for training their models.

Hugging Face's goal is to make natural language processing (NLP) more accessible to developers.

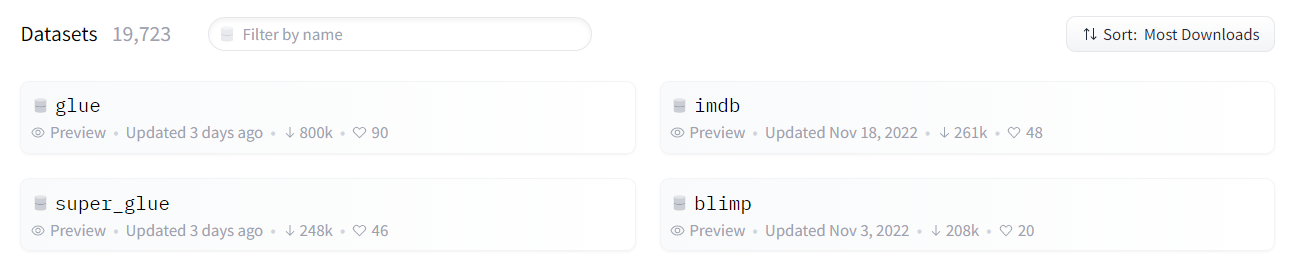

The most popular Hugging Face datasets

Cornell Movie-Dialogs Corpus

This is one of the best-known datasets available on Hugging Face. The Cornell Movie-Dialogs Corpus contains conversations extracted from movie screenplays, and natural language processing (NLP) models can be trained on this large body of text.

The collection contains 220,579 conversational exchanges between 10,292 pairs of movie characters.

You can use this dataset for a variety of NLP tasks, such as language modeling and question-answering projects. Because the conversations cover so many topics, it is also well suited to building dialogue systems. The dataset has been widely used in research projects as well.
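To make the dialogue-system use concrete, here is a minimal Python sketch. It assumes each conversation has already been loaded as a list of utterances in speaking order (the real corpus ships its own file layout, which this sketch does not parse) and pairs each line with the reply that follows it, a common way to build (prompt, response) training examples. The function name `to_dialogue_pairs` and the sample lines are hypothetical.

```python
from typing import List, Tuple


def to_dialogue_pairs(conversation: List[str]) -> List[Tuple[str, str]]:
    """Pair each utterance with the reply that follows it.

    Assumes `conversation` lists utterances in the order they were spoken.
    """
    return [
        (conversation[i], conversation[i + 1])
        for i in range(len(conversation) - 1)
    ]


if __name__ == "__main__":
    # Made-up lines standing in for one conversation from the corpus.
    convo = [
        "Where are you going?",
        "Out. I need some air.",
        "Take a coat, it's cold outside.",
    ]
    for prompt, response in to_dialogue_pairs(convo):
        print(f"{prompt!r} -> {response!r}")
```

A conversation of n utterances yields n - 1 pairs, and every utterance except the first and last serves once as a response and once as a prompt.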

That makes it a very useful tool for NLP researchers and developers.

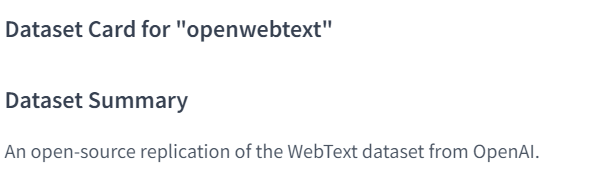

OpenWebText Corpus

The OpenWebText Corpus is a collection of web pages that you can find on the Hugging Face platform. It covers a wide range of web content, such as articles, blogs, and forums, and the pages were selected for their quality.

The dataset is especially valuable for training and evaluating NLP models. Because it is raw, unlabeled text, it is mainly used to pretrain language models, which can then be fine-tuned for tasks such as translation, summarization, and sentiment analysis.

OpenWebText is an open-source recreation of OpenAI's WebText corpus, built from web pages linked in Reddit posts with at least three upvotes, which acts as a rough quality filter. It is a large dataset of roughly 38GB of text.
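Because OpenWebText is unlabeled text, a typical preprocessing step before language-model pretraining is to concatenate tokenized documents and cut them into fixed-length blocks. The sketch below shows that idea, similar in spirit to the `group_texts` helper in Hugging Face's language-modeling example scripts. It assumes documents are already tokenized into integer IDs; `group_into_blocks` and `next_token_pairs` are hypothetical names, not library APIs.

```python
from typing import List, Tuple


def group_into_blocks(docs: List[List[int]], block_size: int) -> List[List[int]]:
    """Concatenate tokenized documents and cut them into equal-length blocks.

    The leftover tail shorter than `block_size` is dropped so every block
    has the same length, which keeps batching simple.
    """
    if block_size <= 0:
        raise ValueError("block_size must be positive")
    flat = [tok for doc in docs for tok in doc]
    usable = (len(flat) // block_size) * block_size
    return [flat[i : i + block_size] for i in range(0, usable, block_size)]


def next_token_pairs(block: List[int]) -> Tuple[List[int], List[int]]:
    """Inputs and targets for next-token prediction: targets are inputs shifted by one."""
    return block[:-1], block[1:]


if __name__ == "__main__":
    # Hypothetical token IDs for three short documents.
    docs = [[1, 2, 3], [4, 5], [6, 7, 8, 9]]
    for block in group_into_blocks(docs, block_size=4):
        print(block, next_token_pairs(block))
```

With `block_size=4`, the nine tokens above become two blocks, `[1, 2, 3, 4]` and `[5, 6, 7, 8]`, and token `9` is dropped.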

BERT

BERT (Bidirectional Encoder Representations from Transformers) is an NLP model. It is pretrained and available on the Hugging Face Hub. BERT was created by the Google AI Language team and was pretrained on a large body of text to capture the context of words in a sentence.

Because BERT is a transformer-based model, it processes the entire input sequence at once rather than one word at a time. Transformer-based models use attention mechanisms to interpret sequential input.

This property lets BERT capture the context of each word from the words on both sides of it in a sentence.
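The attention idea can be sketched in plain Python as scaled dot-product self-attention, softmax(Q K^T / sqrt(d_k)) V. This is a simplified illustration, not BERT's implementation: it assumes the query, key, and value matrices are given directly and omits BERT's learned projections, multiple attention heads, and stacked layers. Because no mask is applied, every position attends to positions on both its left and right.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def transpose(m: Matrix) -> Matrix:
    return [list(col) for col in zip(*m)]


def self_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Scaled dot-product attention over the whole sequence at once.

    Row i of the result is a weighted average of all value rows, with weights
    softmax(q_i . k_j / sqrt(d_k)). No mask is applied, so each position
    sees tokens on both sides.
    """
    d_k = len(k[0])
    scores = matmul(q, transpose(k))  # (seq_len x seq_len) similarity scores
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v)


if __name__ == "__main__":
    # Toy 3-token sequence with 2-dimensional vectors, reused as Q, K and V.
    x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    for row in self_attention(x, x, x):
        print([round(val, 3) for val in row])
```

Every row of attention weights sums to 1, so each output vector is a blend of all the value vectors in the sequence, which is how one token's representation comes to reflect its full context.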

You can use BERT for text classification, language understanding, named entity recognition, and coreference resolution, among other NLP applications. It is also useful for machine reading comprehension, although as an encoder-only model it is not designed for free-form text generation.

SQuAD

SQuAD (Stanford Question Answering Dataset) is a collection of questions and answers that you can use to train machine reading comprehension models. It contains more than 100,000 questions and answers about passages drawn from Wikipedia articles on a wide range of topics. SQuAD differs from earlier datasets.

It focuses on questions that require understanding the context of a passage rather than simply matching keywords.

As a result, it is an excellent resource for building and evaluating question-answering models and other machine comprehension systems. The SQuAD questions were also written by people (crowdworkers) rather than generated automatically, which gives the dataset high quality and consistency.
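SQuAD answers are spans of text taken from the passage, and systems are commonly scored with exact match (EM) and token-level F1 after light normalization. The sketch below follows the normalization used by the official SQuAD evaluation script (lowercasing, removing punctuation and the articles "a", "an", "the", and collapsing whitespace). It is a simplified re-implementation: it compares against a single reference answer, whereas the official script takes the maximum score over all reference answers.

```python
import re
import string
from collections import Counter

_PUNCTUATION = set(string.punctuation)


def normalize_answer(text: str) -> str:
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in _PUNCTUATION)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, ground_truth: str) -> bool:
    return normalize_answer(prediction) == normalize_answer(ground_truth)


def f1_score(prediction: str, ground_truth: str) -> float:
    """Token-overlap F1 between the normalized prediction and reference."""
    pred_tokens = normalize_answer(prediction).split()
    gold_tokens = normalize_answer(ground_truth).split()
    overlap = Counter(pred_tokens) & Counter(gold_tokens)
    num_same = sum(overlap.values())
    if num_same == 0:
        return 0.0
    precision = num_same / len(pred_tokens)
    recall = num_same / len(gold_tokens)
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    print(exact_match("The Eiffel Tower", "eiffel tower"))
    print(round(f1_score("Eiffel Tower in Paris", "the Eiffel Tower"), 3))
```

The first call prints `True` because normalization removes the article and case difference. The second prints `0.667`: both reference tokens are found (recall 1.0), but only half of the predicted tokens are correct (precision 0.5), so the partially correct answer earns partial credit instead of zero.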

Overall, SQuAD is an important resource for NLP researchers and developers.

MNLI

MNLI, or Multi-Genre Natural Language Inference, is a dataset used to train and evaluate machine learning models on reasoning about natural language. The task is to decide whether a given statement (the hypothesis) is entailed by, contradicts, or is neutral with respect to another statement (the premise).
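The three-way task can be sketched as a simple classification setup scored by accuracy. The label order used here (0 = entailment, 1 = neutral, 2 = contradiction) is an assumption based on the GLUE MNLI configuration on the Hugging Face Hub, so check the dataset card before relying on it; the premise/hypothesis pairs and the predictions are made up for illustration.

```python
from typing import List

# Assumed label order, matching the GLUE MNLI configuration on the Hugging
# Face Hub; verify against the dataset card before relying on it.
LABEL_NAMES = ["entailment", "neutral", "contradiction"]


def accuracy(predictions: List[int], labels: List[int]) -> float:
    """Fraction of examples whose predicted label matches the gold label."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and equal length")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


if __name__ == "__main__":
    # Made-up premise/hypothesis pairs in an MNLI-like layout.
    premise = "A man is playing a guitar on stage."
    examples = [
        {"premise": premise, "hypothesis": "A person is performing music.", "label": 0},
        {"premise": premise, "hypothesis": "The man is a famous singer.", "label": 1},
        {"premise": premise, "hypothesis": "Nobody is on the stage.", "label": 2},
    ]
    predictions = [0, 2, 2]  # hypothetical model outputs
    gold = [ex["label"] for ex in examples]
    for ex, pred in zip(examples, predictions):
        print(f"{ex['hypothesis']} -> {LABEL_NAMES[pred]} (gold: {LABEL_NAMES[ex['label']]})")
    print("accuracy:", accuracy(predictions, gold))
```

Pairing one premise with several hypotheses, as above, mirrors how the same sentence can support all three labels depending on what the hypothesis claims.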

MNLI differs from earlier datasets because it includes text from many different genres, ranging from fiction to news pieces and government reports. Thanks to this variety, MNLI is a more representative sample of real-world text than earlier natural language inference datasets such as SNLI, whose premises come from a single genre (image captions).

With more than 400,000 examples, MNLI provides a substantial amount of training data. Each premise/hypothesis pair is annotated with a label to help models learn.

Final Thoughts

In conclusion, Hugging Face datasets are a valuable resource for NLP researchers and developers. Through its diverse collection of datasets, Hugging Face provides a foundation for NLP development.

Of the datasets covered here, the largest is the OpenWebText Corpus.

This high-quality dataset contains roughly 38GB of text and is a valuable resource for training and evaluating NLP models. Try OpenWebText and the other datasets in your next projects.
