Large neural networks trained for language understanding and generation have shown outstanding results across a wide range of tasks in recent years. GPT-3 demonstrated that large language models (LLMs) can be used for few-shot learning and achieve excellent results without requiring large task-specific datasets or updating the model's parameters.

Google, the Silicon Valley tech behemoth, has introduced PaLM, or Pathways Language Model, to the global technology industry as a next-generation AI language model. Google has incorporated a new artificial intelligence architecture into PaLM, with the strategic goal of improving the quality of AI language models.

In this post, we will examine the PaLM algorithm in detail, including the parameters used to train it, the problem it solves, and more.

What is Google's PaLM algorithm?

PaLM stands for Pathways Language Model. It is a new algorithm developed by Google to strengthen the Pathways AI architecture. The main goal of this architecture is to perform as many as a million different tasks at once.

This includes everything from deciphering complex data to concrete reasoning. PaLM has the potential to surpass both current state-of-the-art AI and humans on language and reasoning tasks.

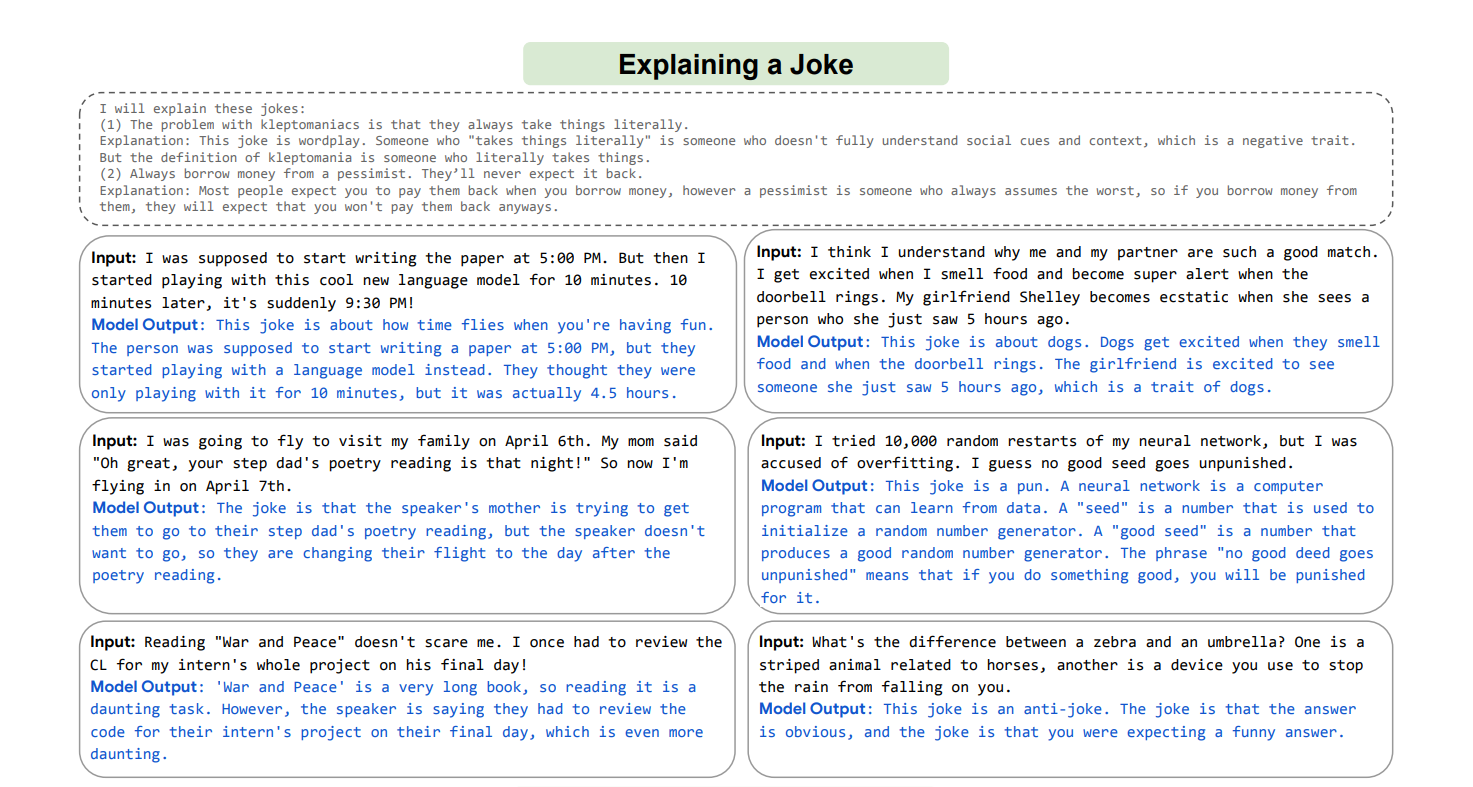

This includes few-shot learning, which mimics the way humans learn new things and combine different pieces of knowledge to tackle challenges they have never seen before. The machine has the added advantage of being able to draw on all of its knowledge to solve a new problem. One example of this skill in PaLM is its ability to explain a joke it has never heard before.

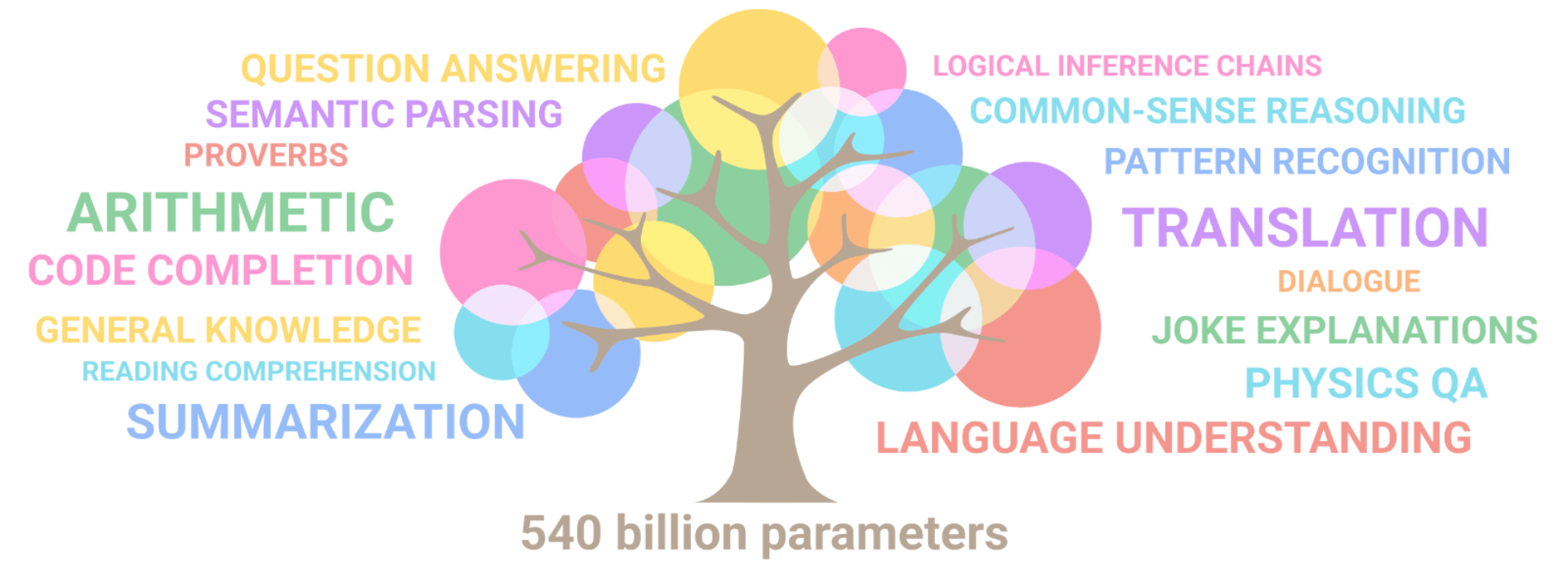

PaLM has demonstrated many breakthrough capabilities on a variety of difficult tasks, including language understanding and generation, multistep arithmetic, code-related tasks, common-sense reasoning, translation, and many more.

It has shown its ability to solve complex problems on multilingual NLP benchmarks. The global technology market can use PaLM to distinguish cause from effect, understand conceptual combinations, play various games, and do many other things.

It can also generate in-depth explanations in many contexts using multistep logical inference, deep language understanding, world knowledge, and other skills.

How did Google develop the PaLM algorithm?

Google's PaLM, built on Pathways, scales up to 540 billion parameters. It is regarded as a single model that can generalize efficiently and effectively across many domains. Pathways at Google is dedicated to developing distributed computation for accelerators.

PaLM is a decoder-only Transformer model trained using the Pathways system. According to Google, PaLM achieves state-of-the-art few-shot performance on many tasks. PaLM used the Pathways system to scale training, for the first time, to the largest TPU-based system configuration to date: 6144 chips.

The training dataset for the AI language model is a mixture of English and multilingual datasets. Built with a "lossless" vocabulary, it contains high-quality web content, conversations, books, GitHub code, Wikipedia, and more. The vocabulary is lossless because it preserves whitespace and splits Unicode characters that are not in the vocabulary into bytes.
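The byte-fallback idea can be illustrated with a small sketch. The toy vocabulary, the `<0x..>` byte-token format, and the greedy longest-match strategy are all assumptions made for illustration; this is not PaLM's actual tokenizer.

```python
# Toy "lossless" tokenizer: whitespace is kept as tokens, and any character
# not covered by the vocabulary falls back to its UTF-8 bytes.
# TOY_VOCAB and the <0x..> byte-token format are hypothetical.
TOY_VOCAB = {"hello", "world", " ", "\n", "he", "l", "o", "w", "r", "d"}
MAX_PIECE_LEN = max(len(piece) for piece in TOY_VOCAB)


def encode(text):
    """Greedy longest-match tokenization with UTF-8 byte fallback."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest vocabulary piece that matches at position i.
        for length in range(min(MAX_PIECE_LEN, len(text) - i), 0, -1):
            piece = text[i:i + length]
            if piece in TOY_VOCAB:
                tokens.append(piece)
                i += length
                break
        else:
            # Out-of-vocabulary character: emit one token per UTF-8 byte.
            for byte in text[i].encode("utf-8"):
                tokens.append(f"<0x{byte:02X}>")
            i += 1
    return tokens


def decode(tokens):
    """Invert encode(): rebuild the exact original string."""
    data = bytearray()
    for token in tokens:
        if len(token) == 6 and token.startswith("<0x") and token.endswith(">"):
            data.append(int(token[3:5], 16))
        else:
            data.extend(token.encode("utf-8"))
    return data.decode("utf-8")
```

Because whitespace is tokenized rather than discarded and unknown characters become bytes, `decode(encode(text))` returns the original text unchanged for any input string.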

Google developed PaLM with Pathways using a standard Transformer architecture in a decoder-only configuration that includes SwiGLU activation, parallel layers, RoPE embeddings, shared input-output embeddings, multi-query attention, and no biases, paired with a lossless vocabulary. PaLM is therefore well placed to provide a solid foundation for Google's Pathways-based AI language models.
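Two of these choices, the SwiGLU activation and the parallel layer layout, are easy to sketch in a few lines. The code below is a minimal pure-Python illustration under stated assumptions: the weight names (`w_gate`, `w_up`, `w_down`) and the `norm`, `attention`, and `mlp` callables are hypothetical placeholders, and real implementations operate on batched tensors rather than Python lists.

```python
import math


def swish(z):
    """Swish (SiLU) activation: z * sigmoid(z)."""
    return z / (1.0 + math.exp(-z))


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def swiglu_mlp(x, w_gate, w_up, w_down):
    """SwiGLU feed-forward: w_down @ (swish(w_gate @ x) * (w_up @ x)), no biases."""
    gate = [swish(g) for g in matvec(w_gate, x)]
    up = matvec(w_up, x)
    hidden = [g * u for g, u in zip(gate, up)]
    return matvec(w_down, hidden)


def parallel_block(x, norm, attention, mlp):
    """Parallel layer: y = x + MLP(norm(x)) + Attention(norm(x)).

    The attention and MLP branches both read the same normalized input,
    instead of the MLP reading the output of the attention branch.
    """
    h = norm(x)
    return [xi + m + a for xi, m, a in zip(x, mlp(h), attention(h))]
```

In the parallel layout the two branches do not depend on each other, so their computations can be overlapped, whereas the usual serialized layout has to finish attention before the feed-forward part can start.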

Parameters used to train PaLM

Last year, Google launched Pathways, a single model that can be trained to do thousands, if not millions, of things. It has been called a "next-generation AI architecture" because it can overcome the limitation of existing models, which are trained to do only one thing. Rather than extending the capabilities of existing models, new models are usually built from the ground up to accomplish a single task.

As a result, researchers have built tens of thousands of models for tens of thousands of different tasks. This is a time-consuming and resource-intensive undertaking.

With Pathways, Google has shown that a single model can handle a variety of tasks and can draw on and combine its existing skills to learn new tasks more quickly and effectively.

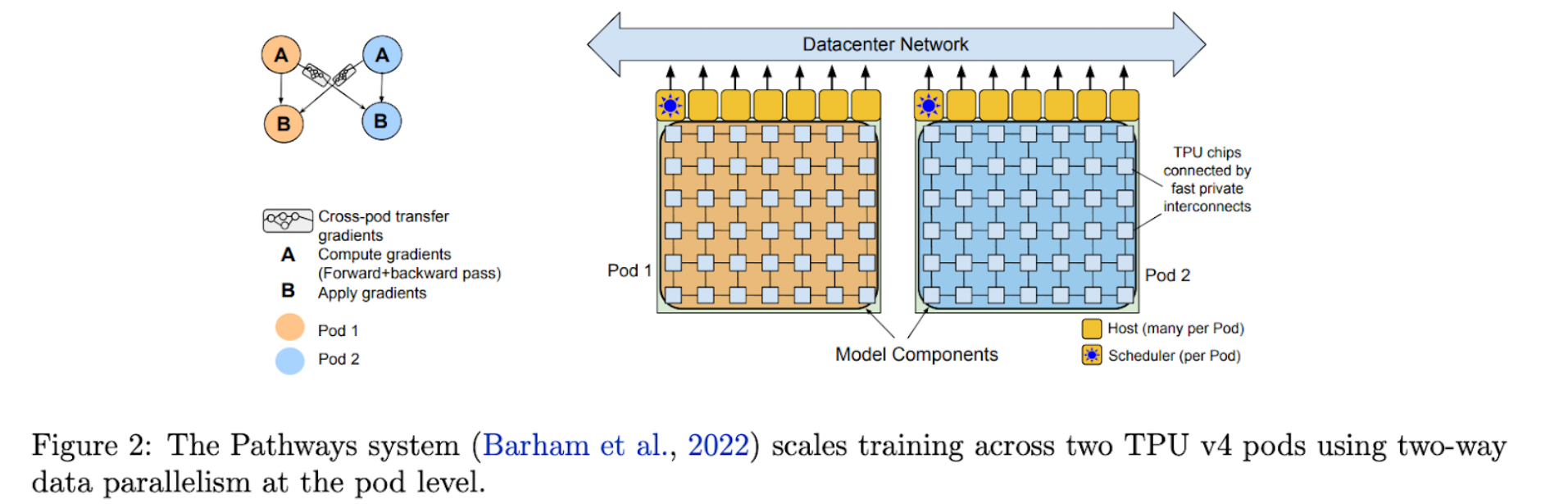

Pathways could enable multimodal models that cover vision, language understanding, and auditory processing all at once. With the Pathways Language Model (PaLM), a single 540-billion-parameter model is trained across multiple TPU v4 Pods.

PaLM, a dense decoder-only Transformer model, surpasses the state of the art in few-shot performance on a wide range of tasks. PaLM is trained on two TPU v4 Pods connected over a data center network (DCN).

It takes advantage of both model and data parallelism. The researchers used 3072 TPU v4 chips in each Pod for PaLM, attached to 768 hosts. According to the researchers, this is the largest TPU configuration disclosed so far, and it allowed them to scale training without using pipeline parallelism.
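The figures quoted above fit together with simple arithmetic, shown here as a short check. The constant names are made up for this sketch; the numbers are the ones given in the text.

```python
# Hardware figures quoted in the text (constant names are illustrative).
PODS = 2
CHIPS_PER_POD = 3072
HOSTS_PER_POD = 768

total_chips = PODS * CHIPS_PER_POD                 # chips across both Pods
total_hosts = PODS * HOSTS_PER_POD                 # hosts across both Pods
chips_per_host = CHIPS_PER_POD // HOSTS_PER_POD    # chips attached to each host

print(f"total chips:    {total_chips}")
print(f"total hosts:    {total_hosts}")
print(f"chips per host: {chips_per_host}")
```

The total comes to 6144 chips, which matches the system size mentioned earlier, with four chips attached to each host.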

In general, pipelining is the technique of feeding instructions through a processor in stages, so that different stages work on different instructions at the same time. With pipelined model parallelism (or pipeline parallelism), the layers of a model are divided into stages that can be processed in parallel.

When one stage completes the forward pass for a micro-batch, its activations are sent to the next stage. When the next stage completes its backward pass, the gradients are sent back in the opposite direction.
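A toy simulation can make this stage-by-stage hand-off concrete. The sketch below assumes a simple schedule in which stage `s` starts micro-batch `m` at time step `s + m`, and it covers only the forward pass. The function names are hypothetical, and this is not the scheduler of any particular framework.

```python
def forward_schedule(num_stages, num_microbatches):
    """Return, for each time step, the (stage, microbatch) pairs that are active."""
    steps = []
    total_steps = num_stages + num_microbatches - 1
    for t in range(total_steps):
        active = []
        for stage in range(num_stages):
            microbatch = t - stage  # stage s sees micro-batch m at step s + m
            if 0 <= microbatch < num_microbatches:
                active.append((stage, microbatch))
        steps.append(active)
    return steps


def idle_fraction(num_stages, num_microbatches):
    """Fraction of (stage, time step) slots in which a stage has nothing to do."""
    schedule = forward_schedule(num_stages, num_microbatches)
    busy_slots = sum(len(active) for active in schedule)
    total_slots = num_stages * len(schedule)
    return 1.0 - busy_slots / total_slots


if __name__ == "__main__":
    for t, active in enumerate(forward_schedule(num_stages=3, num_microbatches=4)):
        print(t, active)
    print(idle_fraction(num_stages=3, num_microbatches=4))
```

In this simplified schedule, stages sit idle while the pipeline fills and drains. That idle time is one general cost of pipeline parallelism, and it shrinks as the number of micro-batches grows relative to the number of stages.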

PaLM's breakthrough capabilities

PaLM shows groundbreaking capabilities on a range of difficult tasks. Here are several examples:

1. Language generation and understanding

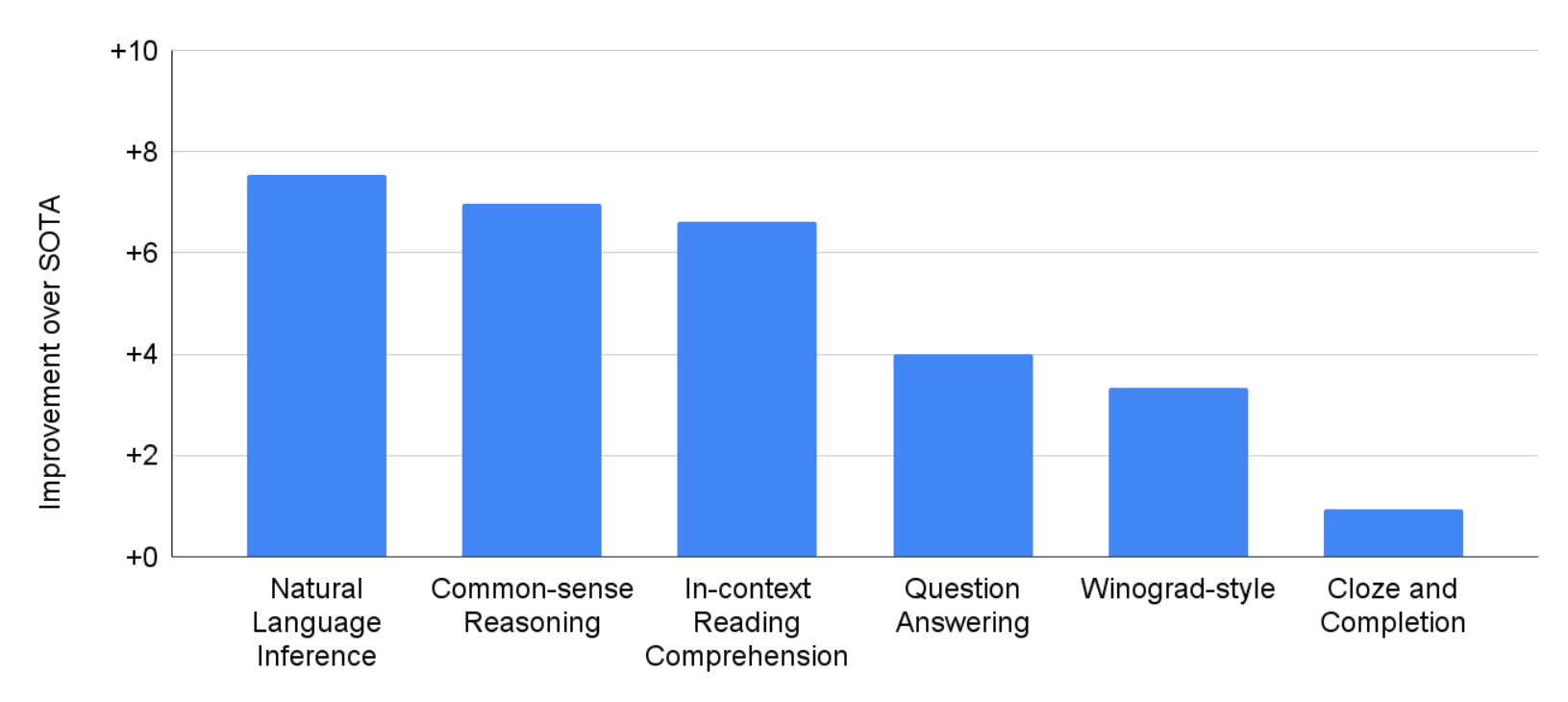

PaLM was evaluated on 29 different English NLP tasks.

In the few-shot setting, PaLM 540B outperformed previous large models such as GLaM, GPT-3, Megatron-Turing NLG, Gopher, Chinchilla, and LaMDA on 28 of the 29 tasks. These include open-domain closed-book question-answering tasks, cloze and sentence-completion tasks, Winograd-style tasks, in-context reading comprehension tasks, common-sense reasoning tasks, SuperGLUE tasks, and natural language inference.

On several BIG-bench tasks, PaLM demonstrates strong natural language understanding and generation capabilities. For example, the model can distinguish cause from effect, understand conceptual combinations in specific contexts, and guess a movie from an emoji. Even though only 22% of the training corpus is non-English, PaLM performs well on multilingual NLP benchmarks, including translation, in addition to English NLP tasks.

2. Reasoning

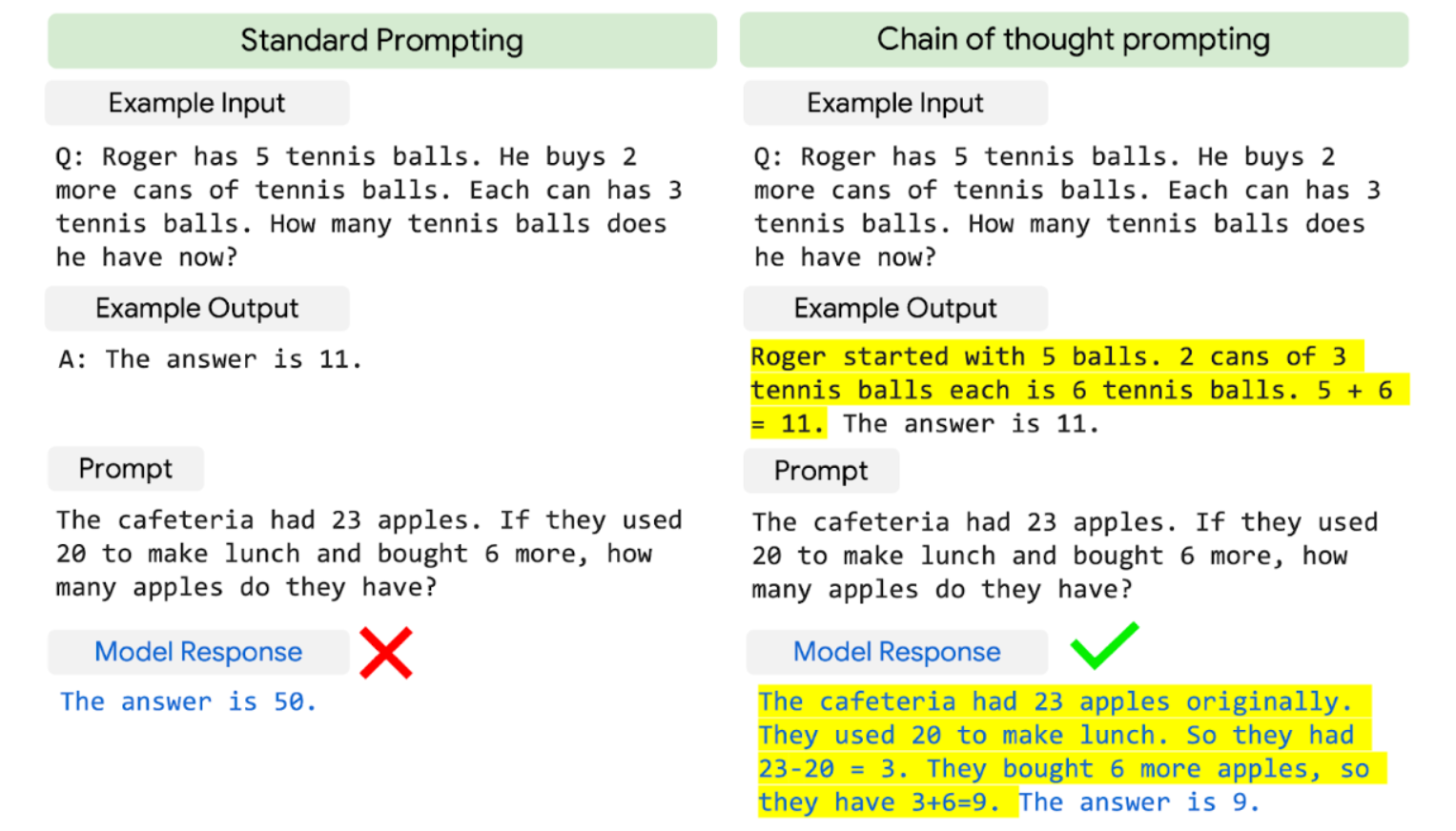

PaLM combines model scale with chain-of-thought prompting to show breakthrough capabilities on reasoning challenges that require multistep arithmetic or common-sense reasoning.

Previous LLMs, such as Gopher, benefited less from model scale in terms of performance gains. PaLM 540B with chain-of-thought prompting performed well on three arithmetic datasets and two common-sense reasoning datasets.

Using 8-shot prompting, PaLM solves 58% of the problems in GSM8K, a benchmark of thousands of challenging grade-school math questions. This surpasses the previous best score of 55%, which was obtained by fine-tuning the GPT-3 175B model on a training set of 7500 problems and combining it with an external calculator and verifier.

This new score is especially notable because it approaches the 60% average of problems solved by 9-to-12-year-olds. PaLM can also explain original jokes that cannot be found on the internet.
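Few-shot chain-of-thought prompting of the kind described in this section amounts to placing a handful of worked examples, each with its reasoning spelled out, in front of the new question. The sketch below shows one plausible way to assemble such a prompt. The `Q:`/`A:` layout, the function name, and the two example problems are assumptions made for illustration; they are not taken from GSM8K or from Google's evaluation setup.

```python
def build_few_shot_prompt(examples, question):
    """Concatenate worked examples, then the new question with an open answer slot."""
    parts = []
    for example in examples:
        parts.append(
            f"Q: {example['question']}\n"
            f"A: {example['reasoning']} The answer is {example['answer']}."
        )
    # The final answer is left open for the model to complete.
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


# Hypothetical worked examples; an 8-shot prompt would list eight of these.
EXAMPLES = [
    {
        "question": "Tom has 3 boxes with 4 pens each. How many pens does he have?",
        "reasoning": "Each box has 4 pens and there are 3 boxes, so 3 * 4 = 12.",
        "answer": "12",
    },
    {
        "question": "A book costs 7 dollars. How much do 5 books cost?",
        "reasoning": "One book costs 7 dollars, so 5 books cost 5 * 7 = 35.",
        "answer": "35",
    },
]

if __name__ == "__main__":
    print(build_few_shot_prompt(EXAMPLES, "Sara reads 6 pages a day. How many pages does she read in 9 days?"))
```

No model parameters are updated in this setting: the examples only shape the text the model is asked to continue.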

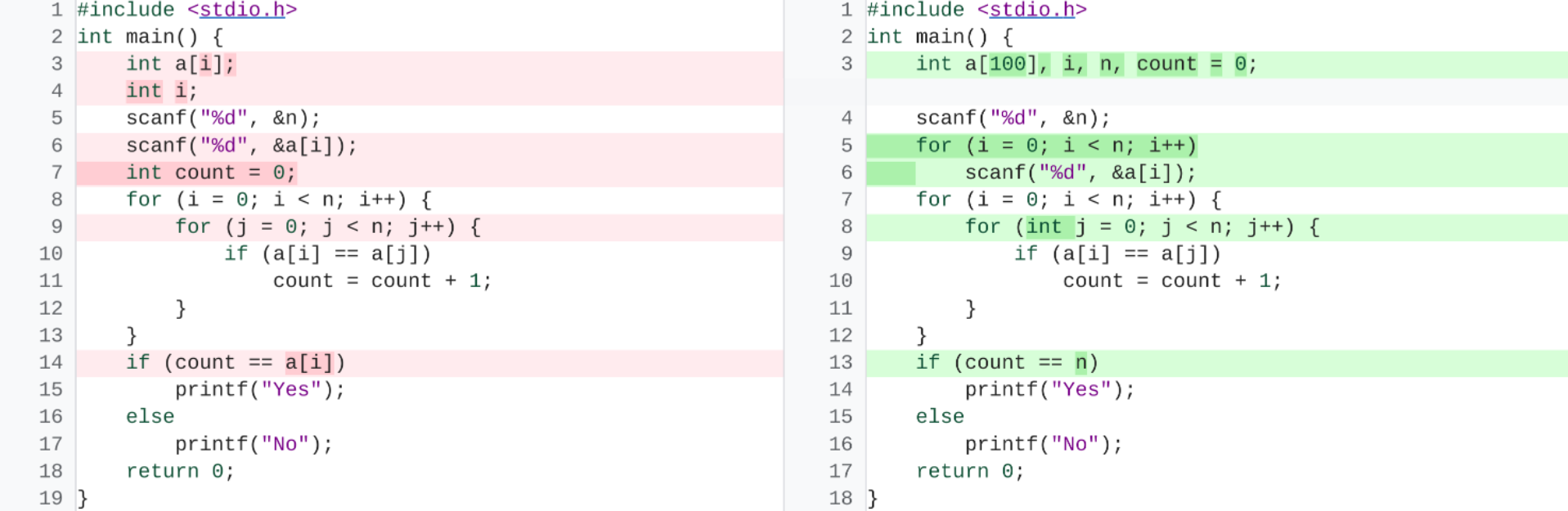

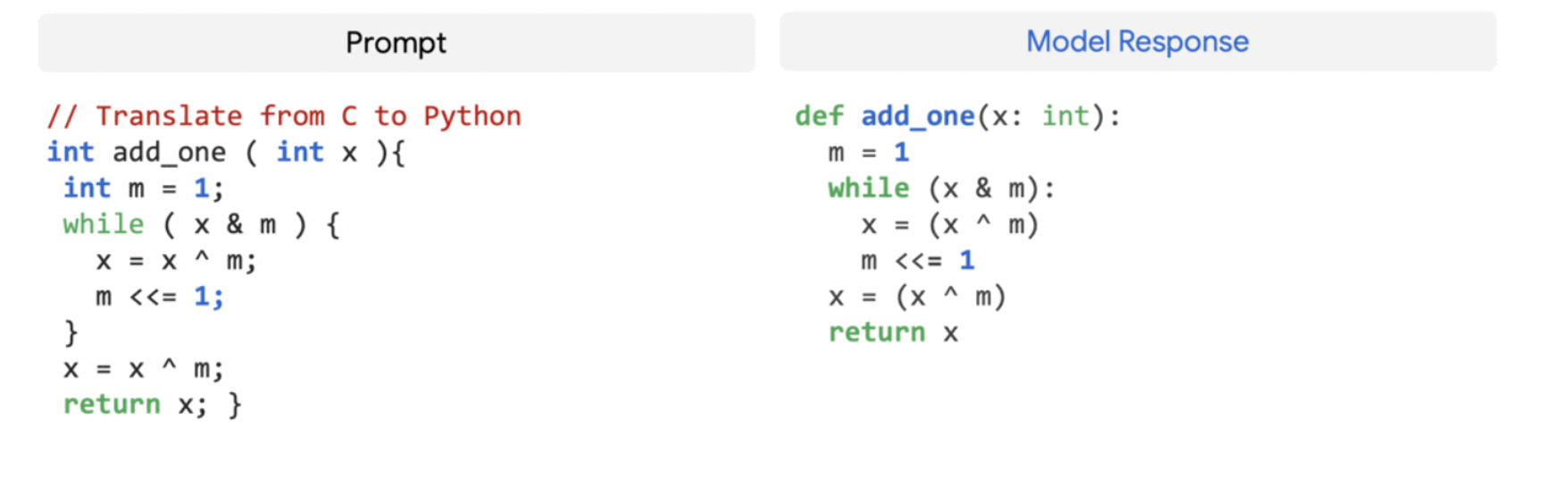

3. Code generation

LLMs have also been shown to perform well on coding tasks, including generating code from a natural language description (text-to-code), translating code between languages, and fixing compilation errors. Despite having only 5% code in its pre-training dataset, PaLM 540B performs well on both coding and natural language tasks in a single model.

Its few-shot performance is remarkable, as it matches the fine-tuned Codex 12B while being trained on 50 times less Python code. This finding supports earlier results showing that larger models can be more sample-efficient than smaller ones, because they transfer learning more effectively from other programming languages and from natural language data.

Conclusion

PaLM demonstrates the ability of the Pathways system to scale to thousands of accelerator chips across two TPU v4 Pods by efficiently training a 540-billion-parameter model with a well-studied, well-established recipe: a dense decoder-only Transformer model.

By pushing the limits of model scale, it achieves breakthrough few-shot performance on a range of natural language processing, reasoning, and coding challenges.
