Natural Language Processing (NLP) has changed the way we interact with machines. Today, our apps and software can process and understand human language.

As a field of artificial intelligence, NLP focuses on natural-language communication between computers and people.

It helps machines analyze, understand, and generate human language, opening up a wealth of applications such as speech recognition, machine translation, sentiment analysis, and chatbots.

The field has made major advances in recent years, allowing machines not only to understand language but also to use it creatively and appropriately.

In this article, we look at several NLP language models. So follow along, and let's learn about these models!

1. BERT

BERT (Bidirectional Encoder Representations from Transformers) is a language model for natural language processing (NLP). It was created in 2018 by Google and is based on the Transformer architecture, a neural network designed to process sequential input.

BERT is a pre-trained language model, which means it has been trained on large volumes of text data to learn the patterns and structure of natural language.

BERT is a bidirectional model, meaning it interprets the context and meaning of a word based on both the words that precede it and the words that follow it, which makes it more effective at understanding complex sentences.
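To make the idea of bidirectional context concrete, here is a minimal sketch in plain Python. It is not BERT's implementation; the function names and the example sentence are assumptions chosen for illustration. It only contrasts which words a left-to-right model and a bidirectional model can see when interpreting one target word.

```python
def left_to_right_context(tokens, position):
    """Words a strictly left-to-right model can see for the target position."""
    return tokens[:position]


def bidirectional_context(tokens, position):
    """Words a bidirectional encoder can see: everything except the target."""
    return tokens[:position] + tokens[position + 1:]


# Hypothetical example sentence: "bank" is ambiguous until "river" is seen.
sentence = "the bank of the river was muddy".split()
target = sentence.index("bank")

print(left_to_right_context(sentence, target))
print(bidirectional_context(sentence, target))
```

In this example only the bidirectional view contains "river", the word that disambiguates "bank".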

How does it work?

BERT is pre-trained on large amounts of unlabeled text, with no human annotation required. During training, it learns to predict words that have been hidden from a sentence (masked language modeling) and to judge whether one sentence follows another (next sentence prediction).
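The masked-word objective can be sketched as follows. This is a simplified illustration under stated assumptions, not BERT's actual preprocessing: the function name `mask_tokens`, the whitespace tokenization, and the seed are hypothetical, and the real procedure also sometimes substitutes a random word or keeps the original instead of always inserting the mask token.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Hide a random subset of tokens and record what the model must predict.

    Returns (masked_tokens, labels); labels hold the original word at masked
    positions and None elsewhere.
    """
    rng = random.Random(seed)
    masked, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK)   # the model sees the mask token here
            labels.append(tok)    # and is trained to recover this word
        else:
            masked.append(tok)
            labels.append(None)   # no prediction needed at this position
    return masked, labels


tokens = "the quick brown fox jumps over the lazy dog".split()
masked, labels = mask_tokens(tokens, mask_prob=0.3)
print(masked)
print(labels)
```

Because the labels come from the text itself, no human annotation is needed to build training examples.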

With this training, BERT can produce high-quality embeddings that can be used in a variety of NLP tasks, including sentiment analysis, text classification, question answering, and more.

In addition, BERT can be fine-tuned for a specific project by training it further on a smaller dataset focused on that task.

Where is BERT used?

BERT is widely used across popular NLP applications. Google, for example, has used it to improve the accuracy of its search engine results, while Facebook has used it to improve its recommendation algorithms.

BERT has also been used in chatbot sentiment analysis, machine translation, and natural language understanding.

In addition, BERT has been used in many academic research papers to improve the performance of NLP models on a variety of tasks. Overall, BERT has become an essential tool for NLP researchers and practitioners, and its influence on the field is expected to keep growing.

2. RoBERTa

RoBERTa (Robustly Optimized BERT Pretraining Approach) is a natural language processing model released by Facebook AI in 2019. It is an improved version of BERT intended to overcome some shortcomings of the original BERT model.

RoBERTa was trained in a way similar to BERT, except that it uses more training data and refines the training procedure to achieve better performance.

Like BERT, RoBERTa is a pre-trained language model that can be fine-tuned to achieve high accuracy on a given task.

How does it work?

RoBERTa uses a self-supervised learning strategy to train on a large amount of text data. During training it learns to predict missing words in sentences; unlike BERT, it drops the next sentence prediction objective.

RoBERTa also uses several refined training techniques, such as dynamic masking, to improve the model's ability to generalize to new data.
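The difference between static and dynamic masking can be sketched in a few lines. This is an illustrative toy under stated assumptions, not RoBERTa's training code: the helper names, the whitespace tokens, and the masking probability are all hypothetical. Static masking picks the hidden positions once and reuses them every epoch, while dynamic masking draws a fresh pattern each time the sentence is seen.

```python
import random

MASK = "<mask>"


def mask_once(tokens, rng, mask_prob=0.3):
    """Replace each token with the mask symbol with probability mask_prob."""
    return [MASK if rng.random() < mask_prob else tok for tok in tokens]


def static_masking(tokens, epochs, seed=0):
    """Choose the masked positions once and reuse them in every epoch."""
    fixed = mask_once(tokens, random.Random(seed))
    return [list(fixed) for _ in range(epochs)]


def dynamic_masking(tokens, epochs, seed=0):
    """Draw a new masking pattern every time the sentence is presented."""
    rng = random.Random(seed)
    return [mask_once(tokens, rng) for _ in range(epochs)]


tokens = "language models learn patterns from very large text corpora".split()
for epoch, view in enumerate(dynamic_masking(tokens, epochs=3)):
    print(epoch, " ".join(view))
```

With dynamic masking the model is asked to recover different words from the same sentence across epochs, so it sees more varied training signals from the same data.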

Moreover, to increase its accuracy, RoBERTa draws on a large amount of data from many sources, including Wikipedia, Common Crawl, and BooksCorpus.

Where can we use RoBERTa?

RoBERTa is commonly used for sentiment analysis, text classification, named entity recognition, machine translation, and question answering.

It can be used to extract relevant insights from unstructured text data such as social media, customer reviews, news articles, and other sources.

In addition to these standard NLP tasks, RoBERTa has been used in more specialized applications, such as document summarization, text generation, and speech recognition. It has also been used to make chatbots, virtual assistants, and other conversational AI systems more accurate.

3. OpenAI's GPT-3

GPT-3 (Generative Pre-trained Transformer 3) is an OpenAI language model that produces human-like writing using deep learning techniques. With 175 billion parameters, GPT-3 was one of the largest language models ever built at the time of its release.

The model was trained on a wide range of text data, including books, articles, and web pages, and can generate content in a variety of styles.

How does it work?

GPT-3 generates text using an unsupervised learning approach. This means the model is not explicitly taught to perform any particular task; instead, it learns to produce text by recognizing patterns in large volumes of text data, predicting each next word from the words that came before it.
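The idea of generating text from patterns in raw text can be shown with a deliberately tiny stand-in. The sketch below is a bigram counter, not a Transformer and nothing like GPT-3 in scale or architecture; the corpus and function names are assumptions for illustration. It shares only the core loop: learn from unlabeled text which word tends to follow which, then generate by repeatedly predicting the next word.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count, for every word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(counts, start, max_words=5):
    """Greedily extend the text with the most frequent follower of the last word."""
    out = [start]
    for _ in range(max_words):
        followers = counts.get(out[-1])
        if not followers:
            break  # the last word was never followed by anything in training
        out.append(followers.most_common(1)[0][0])
    return " ".join(out)


# Hypothetical toy corpus; no labels are attached to any sentence.
corpus = [
    "the cat sat on the mat",
    "the cat ate the fish",
    "the dog sat on the rug",
]
counts = train_bigram(corpus)
print(generate(counts, "the"))
```

A large language model replaces the count table with a neural network that conditions on a long context, but the generate-one-word-at-a-time loop is the same in spirit.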

By training it further on smaller, task-specific datasets, the model can be fine-tuned for particular tasks such as text completion or sentiment analysis.

Areas of Use

GPT-3 has many applications in natural language processing. Text completion, language translation, sentiment analysis, and other applications are possible with the model. GPT-3 has been used to write poetry, news stories, and computer code.

One of the most promising applications of GPT-3 is building chatbots and virtual assistants. Because the model can generate human-like text, it is well suited to conversational applications.

GPT-3 has also been used to produce tailored content for websites and social media platforms, and to assist with data analysis and research.

4. GPT-4

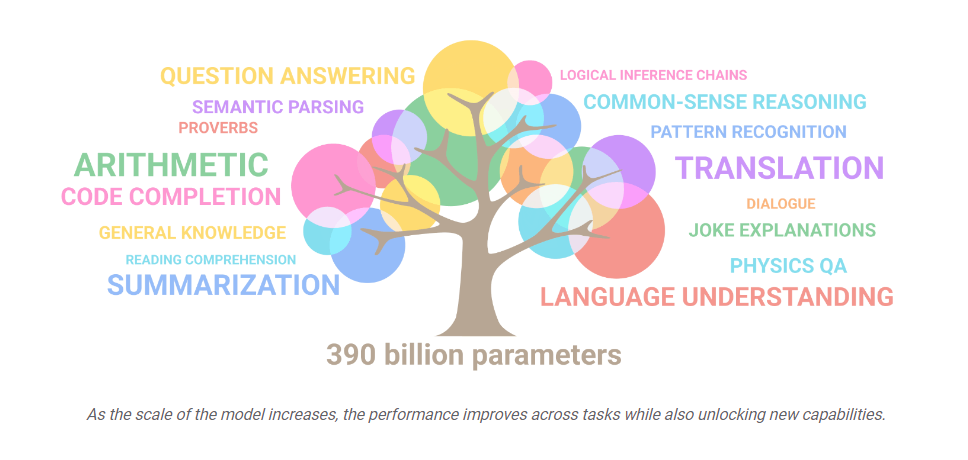

GPT-4 is the most recent and most advanced language model in OpenAI's GPT series. OpenAI has not disclosed its parameter count, so figures such as 10 trillion parameters are unconfirmed, but the model outperforms its predecessor, GPT-3, and is among the most capable AI models available.

How does it work?

GPT-4 generates natural-language text using sophisticated deep learning algorithms. It was trained on a large text dataset that includes books, journals, and web pages, which allows it to produce content on a wide range of topics.

Moreover, by training it on smaller, task-specific datasets, GPT-4 can be fine-tuned for particular tasks such as question answering or summarization.

Areas of Use

Because of its scale and advanced capabilities, GPT-4 supports a wide variety of applications.

One of its most promising uses is natural language processing, where it can be used to build chatbots, virtual assistants, and language translation systems that produce natural-language responses nearly indistinguishable from those written by people.

GPT-4 may also be used in education.

The model can be used to develop intelligent tutoring systems that adapt to a student's learning style and provide individualized feedback and help. This can help improve the quality of education and make learning more accessible to everyone.

5. XLNet

XLNet is a language model created in 2019 by researchers at Carnegie Mellon University and Google AI. Its design is based on the Transformer architecture, which is also used in BERT and other language models.

XLNet, however, introduces a permutation-based pre-training strategy that allows it to outperform other models on a variety of natural language tasks.

How does it work?

XLNet was built using an autoregressive language modeling approach, which involves predicting the next word in a text sequence based on the ones that precede it.

However, in contrast to other language models that read strictly left to right or right to left, XLNet adopts a permutation approach that trains over the possible orderings of the words in a sequence. This lets it capture long-range dependencies between words and make more accurate predictions.
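The permutation idea can be sketched without any neural network. The snippet below is an illustration under stated assumptions, not XLNet's implementation: the function name, the seed, and the example sentence are hypothetical. It samples one prediction order for a sentence and lists, for each target word, which other words would already be visible as context under that order.

```python
import random


def permutation_targets(tokens, seed=0):
    """Sample one factorization order and list the context for each target.

    Returns (order, steps), where each step is
    (target_position, target_word, visible_context) and visible_context is a
    list of (position, word) pairs predicted earlier in the sampled order.
    """
    rng = random.Random(seed)
    order = list(range(len(tokens)))
    rng.shuffle(order)  # the sampled prediction order, not a reordering of the text
    steps = []
    for i, pos in enumerate(order):
        visible = sorted(order[:i])  # positions already predicted
        context = [(p, tokens[p]) for p in visible]
        steps.append((pos, tokens[pos], context))
    return order, steps


tokens = ["New", "York", "is", "a", "city"]
order, steps = permutation_targets(tokens)
print("prediction order:", order)
for pos, word, context in steps:
    print(f"predict {word!r} at position {pos} given {context}")
```

Across many sampled orders, each word ends up being predicted from context on both its left and its right, while every single prediction remains autoregressive.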

In addition to its permutation-based pre-training strategy, XLNet incorporates advanced techniques such as relative positional encoding and a segment-level recurrence mechanism.

These techniques contribute to the model's overall performance and enable it to handle a wide range of natural language processing tasks, such as language translation, sentiment analysis, and named entity recognition.

Areas of Use for XLNet

XLNet's advanced features and flexibility make it an effective tool for a wide range of natural language processing applications, including chatbots and virtual assistants, language translation, and sentiment analysis.

Its continued development and integration with software and apps will likely lead to even more interesting use cases in the future.

6. ELECTRA

ELECTRA is a natural language processing model created by Google researchers. The name stands for "Efficiently Learning an Encoder that Classifies Token Replacements Accurately", and the model is known for its strong accuracy and speed.

How does it work?

ELECTRA works by replacing a portion of the tokens in a text sequence with generated tokens. The model's objective is to predict correctly whether each token is original or a replacement. As a result, ELECTRA learns the contextual relationships between the words in a text sequence more efficiently.
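The training data for this replaced-token objective can be sketched as follows. This is a toy illustration under stated assumptions, not ELECTRA's pipeline: the function name `corrupt`, the small vocabulary, and the random sampler are hypothetical, and in the real model the replacements come from a small generator network rather than uniform random choice.

```python
import random


def corrupt(tokens, vocab, replace_prob=0.15, seed=0):
    """Swap some tokens for different ones and label every position.

    Returns (corrupted_tokens, is_replaced). The discriminator's task is to
    predict is_replaced for every position, not just the altered ones.
    Assumes vocab contains at least two distinct words.
    """
    rng = random.Random(seed)
    corrupted, is_replaced = [], []
    for tok in tokens:
        if rng.random() < replace_prob:
            # Stand-in for the generator: pick any other vocabulary word.
            candidates = [v for v in vocab if v != tok]
            corrupted.append(rng.choice(candidates))
            is_replaced.append(True)
        else:
            corrupted.append(tok)
            is_replaced.append(False)
    return corrupted, is_replaced


tokens = "the chef cooked the meal".split()
vocab = ["the", "chef", "cooked", "meal", "ate", "dog"]
corrupted, labels = corrupt(tokens, vocab, replace_prob=0.4)
for word, label in zip(corrupted, labels):
    print(f"{word:8s} {'replaced' if label else 'original'}")
```

Every position carries a label, so each sentence yields a training signal at all of its tokens rather than only at a masked subset.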

Moreover, because ELECTRA generates fake tokens instead of masking the originals, it can make use of much larger training sets and longer training runs without running into the same overfitting concerns as standard masked language models.

Areas of Use

ELECTRA can be used for sentiment analysis, which involves identifying the emotional tone of a text.

With its ability to learn from both replaced and unaltered tokens, ELECTRA can be used to build accurate analysis models that better capture the nuances of language and provide more meaningful insights.

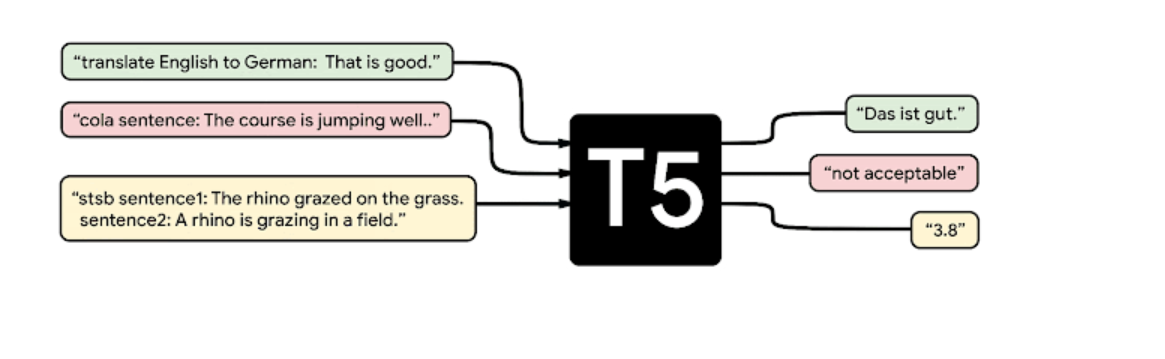

7. T5

T5, or Text-to-Text Transfer Transformer, is a Transformer-based language model from Google AI. It is designed to perform a variety of natural language tasks by flexibly converting input text into output text.

How does it work?

T5 is built on the Transformer architecture and was trained using unsupervised learning on a large amount of text data. Unlike earlier language models, T5 is trained on a variety of tasks, including language understanding, question answering, summarization, and translation, each framed as mapping an input string to an output string.

This allows T5 to perform many tasks by fine-tuning the model on a small amount of task-specific input.
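The text-to-text framing can be sketched as plain string formatting. The snippet below only builds input strings and does not call any model; the task keys, the helper name, and the example sentences are assumptions for illustration, with prefixes written in the style commonly shown for T5.

```python
# Hypothetical task registry: each task is identified by a short text prefix.
TASK_PREFIXES = {
    "translate_en_de": "translate English to German",
    "summarize": "summarize",
}


def to_text_to_text(task, text):
    """Cast any supported task as a single input string for one shared model."""
    return f"{TASK_PREFIXES[task]}: {text}"


examples = [
    ("translate_en_de", "The house is wonderful."),
    ("summarize", "The city council met on Tuesday to discuss the new budget."),
]
for task, text in examples:
    print(to_text_to_text(task, text))
```

Since every task shares the same string-in, string-out interface, one model and one training procedure can cover translation, summarization, and classification alike.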

Where is T5 used?

T5 has many potential applications in natural language processing. It can be used to build chatbots, virtual assistants, and other conversational AI systems that can understand and respond to natural-language input. T5 can also be used for tasks such as language translation, summarization, and text completion.

T5 was released as open source by Google and has been widely adopted by the NLP community for a variety of applications such as text classification, question answering, and machine translation.

8. PaLM

PaLM (Pathways Language Model) is an advanced language model developed by Google AI. It is intended to improve the performance of natural language models to meet the growing demand for complex language tasks.

How does it work?

Like other popular language models such as BERT and GPT, PaLM is a Transformer-based model. However, its design and training approach set it apart from other models.

To improve performance and generalization, PaLM is trained using a multi-task learning paradigm that lets the model learn from many problems simultaneously.

Where do we use PaLM?

PaLM can be used for a variety of NLP tasks, especially those that call for a deep understanding of natural language. It is useful for sentiment analysis, question answering, language modeling, machine translation, and many other tasks.

It can be integrated into various programs and tools, such as chatbots, virtual assistants, and voice recognition systems, to improve their language processing capabilities.

Overall, PaLM is a promising technology with a wide range of possible applications because of its capacity to boost language processing capabilities.

Conclusion

In conclusion, natural language processing (NLP) has changed the way we interact with technology, allowing us to communicate with machines in a more human-like way.

NLP has become more accurate and effective than ever before thanks to recent progress in machine learning, notably the development of large language models such as GPT-4, RoBERTa, XLNet, ELECTRA, and PaLM.

As NLP advances, we can expect to see more powerful and sophisticated language models emerge, with the potential to change how we interact with technology, communicate with one another, and understand the complexity of human language.
