In recent years, large neural networks trained for language understanding and generation have achieved impressive results across a wide range of tasks. GPT-3 showed that large language models (LLMs) can be used for few-shot learning and reach strong results without large amounts of task-specific data or any updates to the model's parameters.

Google, the Silicon Valley tech giant, has introduced PaLM, the Pathways Language Model, to the global technology industry as its next-generation AI language model. Google built a new artificial intelligence design into PaLM with the aim of raising the quality of AI language models.

In this post, we take a detailed look at the PaLM algorithm, including the parameters used to train it, the problems it addresses, and much more.

What is Google's PaLM algorithm?

PaLM stands for Pathways Language Model. Google developed this new algorithm to put its Pathways AI architecture into practice. The architecture's main goal is to let one system handle millions of different tasks at the same time.

These tasks range from interpreting complex data to deductive reasoning. PaLM can surpass current state-of-the-art AI, and even humans, on some language and reasoning tasks.

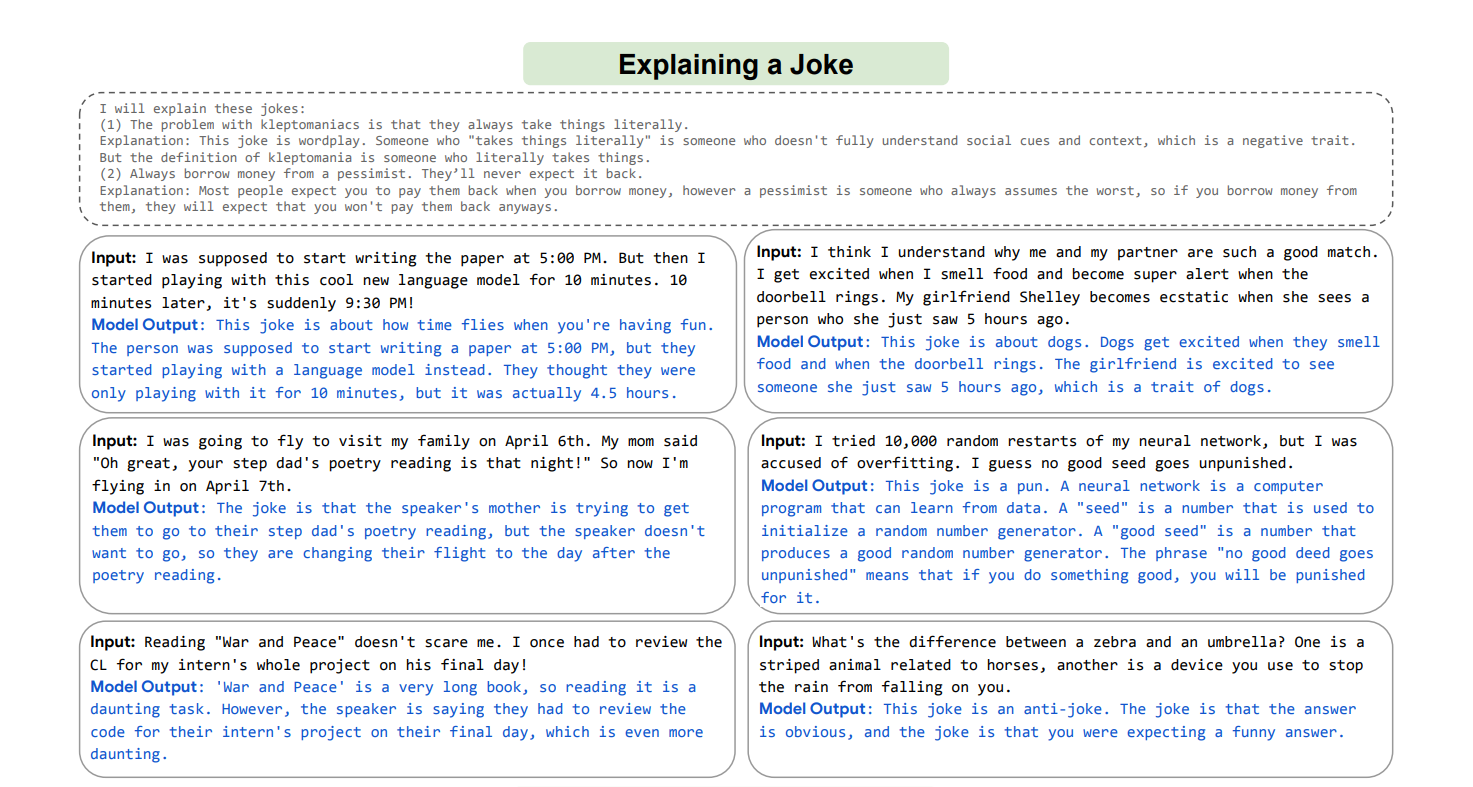

One of these capabilities is few-shot learning. It mimics the way people learn new things by combining different pieces of knowledge to solve problems they have never seen before. A machine with this ability can apply everything it has learned to new problems. For example, PaLM can explain a joke it has never heard before.
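
Few-shot learning with an LLM usually happens entirely in the prompt. The model's weights are not changed. Instead, a handful of worked examples are placed in front of the new question. The sketch below shows one way such a prompt could be assembled. The helper name `build_few_shot_prompt` and the examples are hypothetical. The code does not call PaLM or any real API.

```python
def build_few_shot_prompt(examples, query, instruction=""):
    """Concatenate worked (question, answer) examples and a new query into one prompt.

    Hypothetical helper: the model's parameters are never updated; the
    "learning" comes only from the examples placed in the prompt text.
    """
    parts = []
    if instruction:
        parts.append(instruction)
    for question, answer in examples:
        parts.append(f"Q: {question}\nA: {answer}")
    # The final query ends with an empty answer slot for the model to complete.
    parts.append(f"Q: {query}\nA:")
    return "\n\n".join(parts)


# Made-up examples, purely for illustration.
examples = [
    ("Translate 'cat' to French.", "chat"),
    ("Translate 'dog' to French.", "chien"),
]
prompt = build_few_shot_prompt(examples, "Translate 'bird' to French.")
print(prompt)
```

The model is then expected to continue the text after the final `A:`, following the pattern set by the examples.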

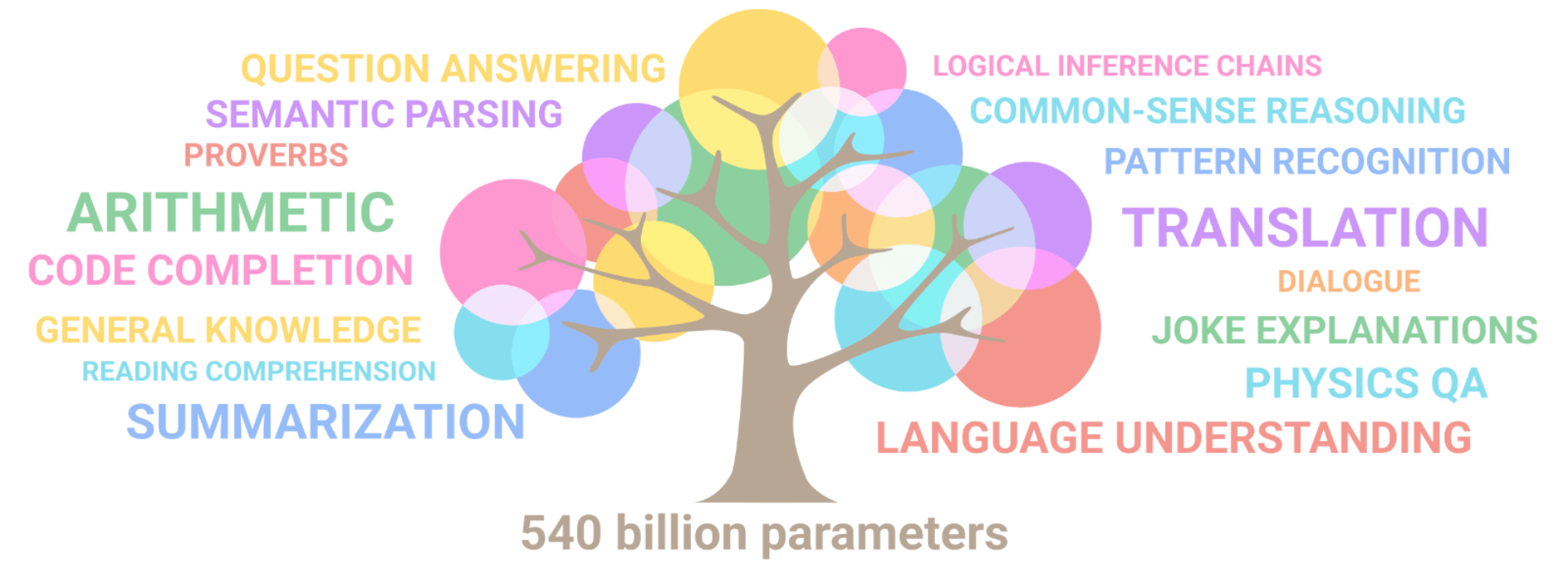

PaLM has shown breakthrough performance on a wide range of challenging tasks. These include language understanding and generation, code-related tasks, multistep arithmetic, common-sense reasoning, translation, and much more.

PaLM has shown it can solve difficult problems on multilingual NLP benchmarks. For the wider technology industry, this means PaLM can be used to:

- distinguish cause from effect,
- understand combinations of concepts,
- play a variety of games,
- and much more.

It can also produce detailed explanations for many scenarios by drawing on several skills: multistep logical inference, deep language understanding, and world knowledge.

How did Google build the PaLM algorithm?

With PaLM, Google scaled the Pathways approach up to 540 billion parameters. PaLM is described as a single model that can generalize accurately and efficiently across many domains. The Pathways team at Google is dedicated to advancing distributed computation on accelerators.

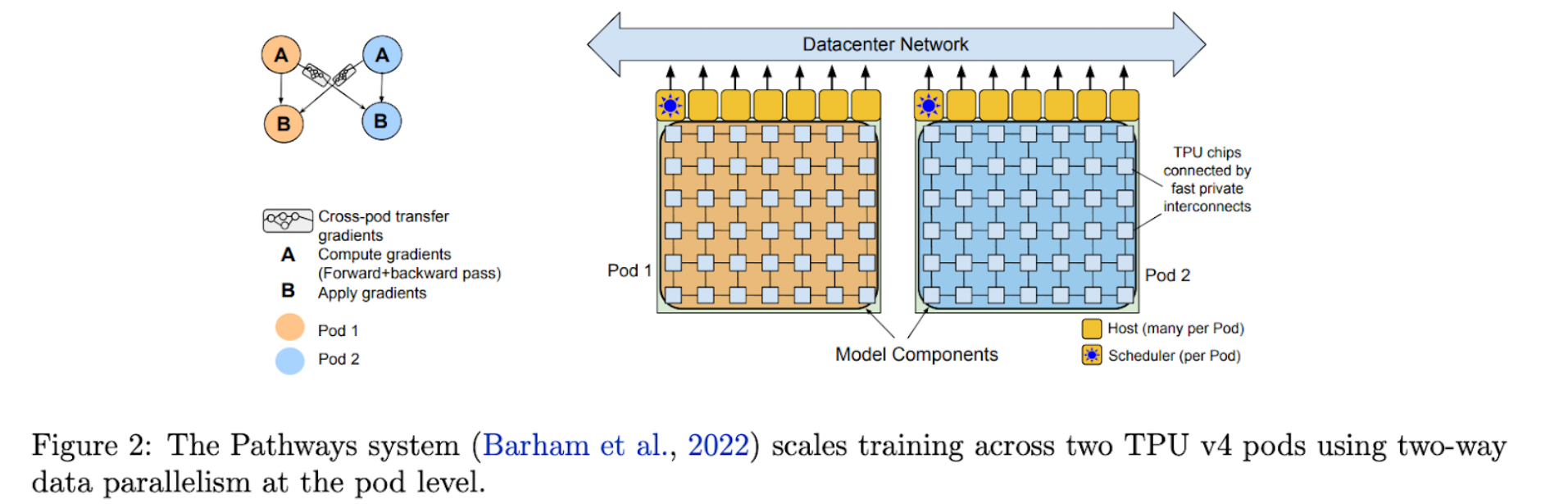

PaLM is a decoder-only Transformer model trained with the Pathways system. According to Google, PaLM achieved state-of-the-art few-shot performance on a number of tasks. Google used the Pathways system to scale PaLM's training to 6,144 chips, the largest TPU-based system configuration used for training at the time.
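
"Decoder-only" means each token may attend only to itself and to earlier tokens. This restriction is what allows the model to generate text from left to right. The pure-Python sketch below shows that causal mask and a masked softmax over one row of attention scores. The helper names and scores are hypothetical illustrations, not PaLM's code.

```python
import math


def causal_mask(seq_len):
    """mask[i][j] is True when token i is allowed to attend to token j (only j <= i)."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


def masked_softmax(scores, allowed):
    """Softmax over one row of attention scores, giving zero weight to masked (future) positions."""
    kept = [s for s, ok in zip(scores, allowed) if ok]
    m = max(kept)  # subtract the max of the visible scores for numerical stability
    exps = [math.exp(s - m) if ok else 0.0 for s, ok in zip(scores, allowed)]
    total = sum(exps)
    return [e / total for e in exps]


mask = causal_mask(4)
for row in mask:
    print(["x" if ok else "." for ok in row])

# Token 1 may only look at tokens 0 and 1, so the large scores at 2 and 3 are ignored.
weights = masked_softmax([0.5, 1.0, 9.0, 9.0], mask[1])
print(weights)
```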

The model's training data combines English and multilingual datasets. It includes high-quality web documents, conversations, books, GitHub code, Wikipedia, and more. PaLM also uses a "lossless" vocabulary, which preserves whitespace and splits Unicode characters that are not in the vocabulary into bytes.
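
To make "lossless" concrete, the sketch below uses a made-up character-level vocabulary that is far smaller and simpler than any real one. Whitespace passes through untouched. Any character missing from the vocabulary falls back to one token per UTF-8 byte, so decoding always recovers the exact original text. The `encode`/`decode` helpers and the `<0xNN>` token spelling are hypothetical.

```python
def encode(text, vocab):
    """Toy tokenizer: in-vocabulary characters stay whole, others fall back to UTF-8 byte tokens."""
    tokens = []
    for ch in text:
        if ch in vocab:
            tokens.append(ch)  # whitespace such as " ", "\t", "\n" is kept, never normalized away
        else:
            # Byte fallback: one token per UTF-8 byte of the unknown character.
            tokens.extend(f"<0x{b:02X}>" for b in ch.encode("utf-8"))
    return tokens


def decode(tokens):
    """Invert encode(): reassemble byte tokens into UTF-8, pass other tokens through."""
    out = bytearray()
    for tok in tokens:
        if tok.startswith("<0x") and tok.endswith(">"):
            out.append(int(tok[3:-1], 16))
        else:
            out.extend(tok.encode("utf-8"))
    return out.decode("utf-8")


# Made-up vocabulary: lowercase letters plus space, tab and newline.
vocab = set("abcdefghijklmnopqrstuvwxyz") | {" ", "\t", "\n"}
text = "hi  se\u00f1or\n\tok"
tokens = encode(text, vocab)
print(tokens)
print(decode(tokens) == text)  # True
```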

Google built PaLM with Pathways on the standard Transformer model architecture in a decoder-only configuration, with several modifications:

- SwiGLU activation
- parallel layers
- RoPE embeddings
- shared input-output embeddings
- multi-query attention
- no bias terms
- the lossless vocabulary

PaLM, in turn, is set to provide a strong foundation for Google's Pathways-based AI language models.
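
Three of these modifications are small enough to sketch in plain Python:

- **SwiGLU feed-forward block:** Swish(xW) gated element-wise by xV, then projected by W2, with no bias terms.
- **Rotary position embeddings (RoPE):** these rotate pairs of query/key dimensions by a position-dependent angle.
- **"Parallel" layer formulation:** y = x + Attention(LN(x)) + MLP(LN(x)), used in place of the usual sequential one.

The matrices, sizes and helper names below are illustrative assumptions rather than PaLM's implementation. Multi-query attention is not shown.

```python
import math


def silu(z):
    """Swish with beta = 1 (also called SiLU): z * sigmoid(z)."""
    return z / (1.0 + math.exp(-z))


def matvec(x, m):
    """Row vector x (length d_in) times matrix m (d_in rows, d_out columns)."""
    return [sum(x[i] * m[i][j] for i in range(len(x))) for j in range(len(m[0]))]


def swiglu_ffn(x, w, v, w2):
    """SwiGLU feed-forward block without biases: (Swish(xW) * xV) W2."""
    gate = [silu(a) for a in matvec(x, w)]
    value = matvec(x, v)
    hidden = [g * u for g, u in zip(gate, value)]  # element-wise gating
    return matvec(hidden, w2)


def rope(x, pos, base=10000.0):
    """Rotary position embedding: rotate each pair (x[2i], x[2i+1]) by pos * base**(-2i/d)."""
    d = len(x)
    out = list(x)
    for i in range(d // 2):
        angle = pos * base ** (-2.0 * i / d)
        c, s = math.cos(angle), math.sin(angle)
        a, b = x[2 * i], x[2 * i + 1]
        out[2 * i] = a * c - b * s
        out[2 * i + 1] = a * s + b * c
    return out


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def parallel_block(x, attn, mlp, norm):
    """Parallel formulation: y = x + Attention(LN(x)) + MLP(LN(x)); both branches see the same LN(x)."""
    h = norm(x)
    return [xi + ai + mi for xi, ai, mi in zip(x, attn(h), mlp(h))]


# RoPE makes the query-key score depend only on the distance between positions.
q, k = [1.0, 0.5, -0.3, 0.8], [0.2, -1.0, 0.7, 0.4]
s1 = dot(rope(q, 5), rope(k, 2))    # positions 5 and 2: offset 3
s2 = dot(rope(q, 13), rope(k, 10))  # positions 13 and 10: offset 3
print(abs(s1 - s2) < 1e-9)  # True

# Toy 2 -> 3 -> 2 SwiGLU block with made-up weights.
W = [[0.1, -0.2, 0.3], [0.4, 0.0, -0.1]]
V = [[1.0, 0.5, -0.5], [0.2, 0.3, 0.1]]
W2 = [[0.5, -0.5], [1.0, 0.0], [0.0, 1.0]]
print(swiglu_ffn([1.0, 2.0], W, V, W2))
```

In the parallel form, the attention and MLP inputs no longer depend on each other, so the two branches can be computed together.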

Parameters used to train PaLM

Last year, Google introduced Pathways, a single model that can be trained to do thousands, if not millions, of things. Google called it a "next-generation AI architecture" because it could outperform existing models trained to do only one thing. Until then, new models were typically built from scratch for a single task instead of extending the capabilities of existing ones.

As a result, researchers ended up building tens of thousands of models for tens of thousands of different tasks. This approach is time-consuming and resource-intensive.

With Pathways, Google showed that a single model can handle many different tasks. It can also combine its existing skills to learn new tasks faster and more accurately.

The same approach can enable multimodal models that handle vision, language understanding, and audio processing all at once. Pathways also made it possible to train a single 540-billion-parameter model, the Pathways Language Model (PaLM), across multiple TPU v4 Pods.

PaLM is a dense decoder-only Transformer model. It exceeds the previous state of the art in few-shot performance across a wide range of tasks. It was trained on two TPU v4 Pods connected over a data center network (DCN).

Training uses both model parallelism and data parallelism. The researchers used 3,072 TPU v4 chips in each Pod, connected to 768 hosts. According to the researchers, this was the largest TPU configuration described to date. It let them scale training without using pipeline parallelism.
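
The hardware figures above fit together as simple arithmetic. The data-parallel part can also be sketched in a few lines. In the sketch, each replica (think: each Pod) computes gradients on its own shard of the batch, and the gradients are then averaged. With equal-sized shards, this gives the same gradient as one large batch. The toy one-parameter model, the data and the helper names are hypothetical. They are not PaLM's training code, and model parallelism is not shown.

```python
def grad_mse(w, batch):
    """Gradient of the mean squared error of the toy model y_hat = w * x."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def data_parallel_grad(w, batch, num_replicas):
    """Split the batch evenly across replicas, compute local gradients, then average them."""
    if len(batch) % num_replicas:
        raise ValueError("batch size must divide evenly across replicas")
    size = len(batch) // num_replicas
    shards = [batch[r * size:(r + 1) * size] for r in range(num_replicas)]
    local = [grad_mse(w, shard) for shard in shards]  # computed independently on each replica
    return sum(local) / num_replicas                  # cross-replica averaging (all-reduce) step


# Figures from the text: two Pods of 3,072 chips, each Pod with 768 hosts.
pods, chips_per_pod, hosts_per_pod = 2, 3072, 768
print(pods * chips_per_pod)            # 6144
print(chips_per_pod // hosts_per_pod)  # 4 chips per host

batch = [(float(x), 3.0 * x) for x in range(1, 9)]  # made-up data where y = 3x
w = 1.0
g_parallel = data_parallel_grad(w, batch, num_replicas=pods)
g_full = grad_mse(w, batch)
print(abs(g_parallel - g_full) < 1e-9)  # True
```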

In general computing, pipelining means moving CPU instructions through a sequence of stages so that several instructions are in progress at once. Pipeline model parallelism (or simply pipeline parallelism) applies the same idea to a model. The model's layers are divided into stages that can be processed in parallel.

When one stage finishes the forward pass for a micro-batch, it sends the activation memory to the next stage. Gradients flow in the opposite direction: once the next stage finishes its backward propagation, the gradients are sent back to the previous stage.
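
The forward half of this process can be sketched as a schedule. The sketch uses a GPipe-style schedule, chosen here as an illustrative example of the general idea. At each time step, stage `s` works on micro-batch `t - s`. Each stage can only start a micro-batch after the previous stage has passed on its activations, so some stage-time slots sit idle. This idle "bubble" is one commonly cited cost of pipeline parallelism. The helper names are hypothetical, and the backward pass, which runs in reverse order, is not shown.

```python
def gpipe_forward_schedule(num_stages, num_microbatches):
    """For each time step, list which micro-batch each stage runs forward (None = idle)."""
    steps = num_stages + num_microbatches - 1
    schedule = []
    for t in range(steps):
        row = []
        for s in range(num_stages):
            mb = t - s  # stage s receives micro-batch mb once stage s-1 has sent its activations
            row.append(mb if 0 <= mb < num_microbatches else None)
        schedule.append(row)
    return schedule


def bubble_fraction(num_stages, num_microbatches):
    """Fraction of stage-time slots left idle during the forward pass."""
    return (num_stages - 1) / (num_microbatches + num_stages - 1)


# 3 stages, 4 micro-batches.
for t, row in enumerate(gpipe_forward_schedule(3, 4)):
    print(t, ["-" if mb is None else mb for mb in row])
print(bubble_fraction(3, 4))
```

Using more micro-batches shrinks the bubble, but it never disappears entirely.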

PaLM's breakthrough capabilities

PaLM shows groundbreaking capabilities across a variety of difficult tasks. Here are several examples:

1. Language generation and understanding

PaLM was evaluated on 29 English NLP tasks.

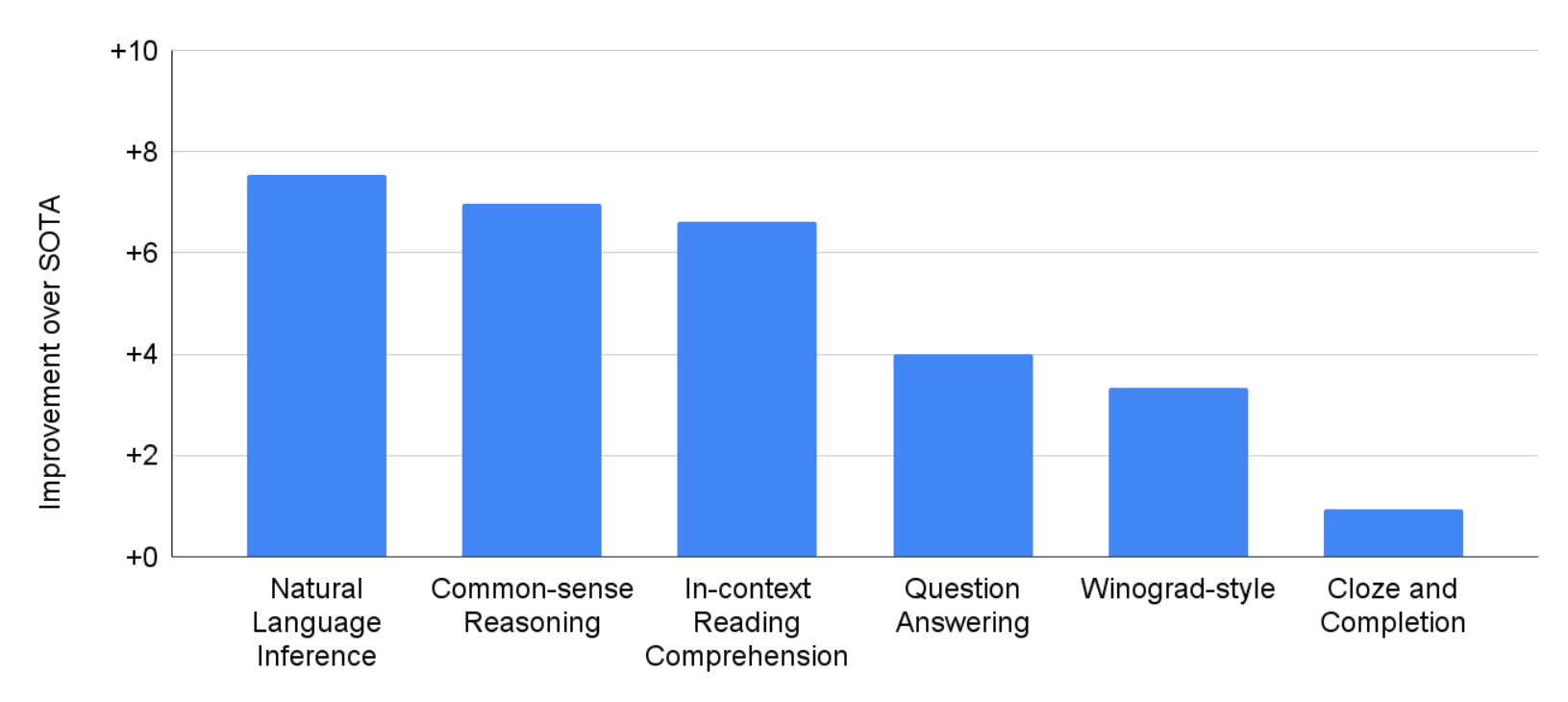

In the few-shot setting, PaLM 540B outperformed prior large models on 28 of the 29 tasks. Those models include GLaM, GPT-3, Megatron-Turing NLG, Gopher, Chinchilla, and LaMDA. The tasks span:

- open-domain closed-book question answering
- cloze and sentence-completion tasks
- Winograd-style tasks
- in-context reading comprehension tasks
- common-sense reasoning tasks
- SuperGLUE tasks
- natural language inference

On several BIG-bench tasks, PaLM shows excellent natural language understanding and generation skills. For example, the model can distinguish cause from effect and understand conceptual combinations in appropriate contexts. It can even guess a movie from a string of emoji. Only 22% of the training corpus is non-English. Even so, PaLM performs well on multilingual NLP benchmarks, including translation, as well as on English NLP tasks.

2. Reasoning

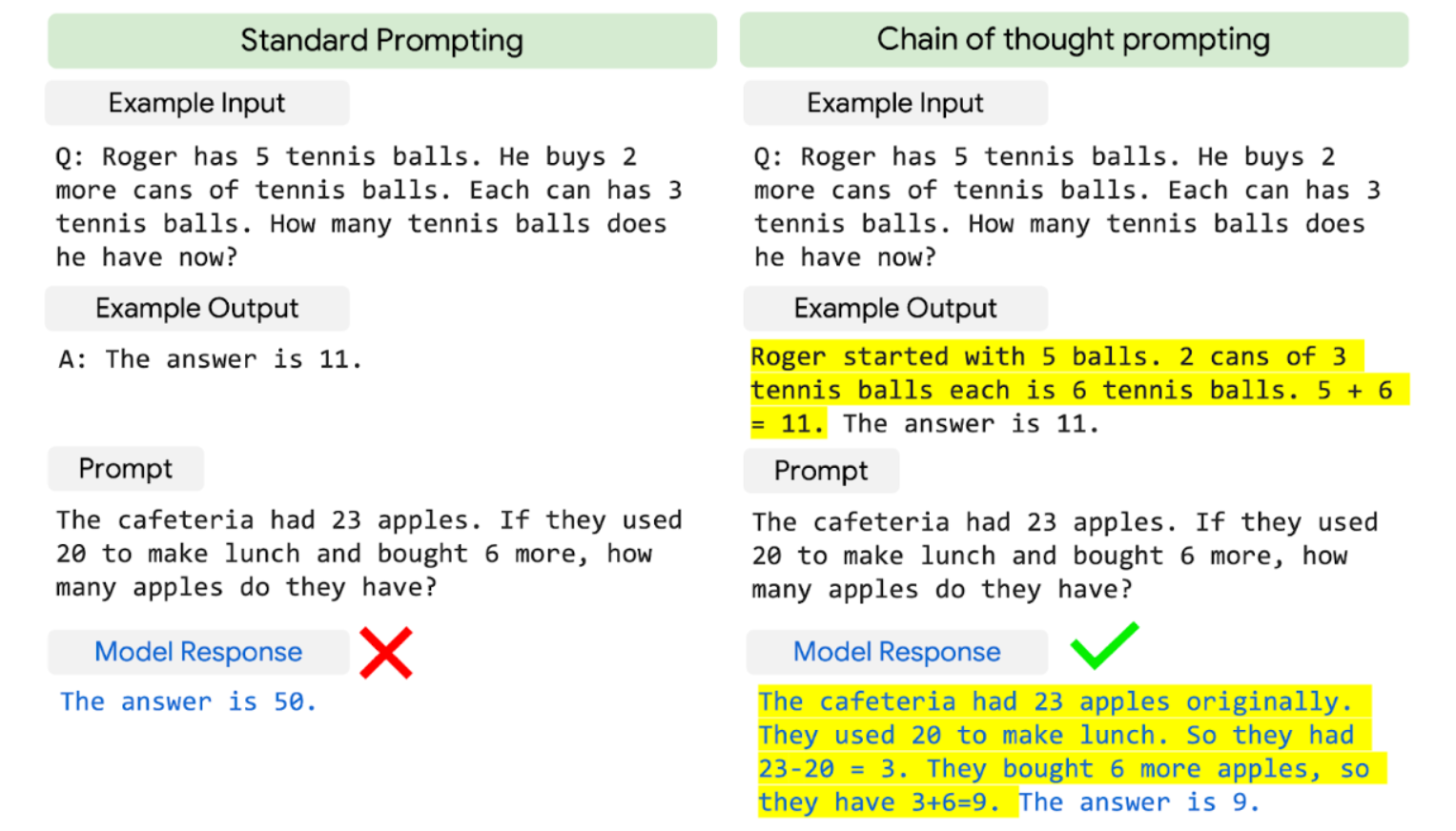

PaLM combines model scale with chain-of-thought prompting. Together, these give it breakthrough capabilities on reasoning problems that require multistep arithmetic or common-sense reasoning.
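
Chain-of-thought prompting changes only the exemplars in a few-shot prompt. Each exemplar spells out the intermediate reasoning steps before stating the final answer. The minimal sketch below uses a single illustrative exemplar and a hypothetical helper name. An evaluation like the 8-shot GSM8K setup mentioned in this post would include eight such exemplars.

```python
# One illustrative exemplar: reasoning steps first, then "The answer is N."
COT_EXEMPLARS = [
    "Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?\n"
    "A: Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. "
    "5 + 6 = 11. The answer is 11.",
]


def build_cot_prompt(exemplars, question):
    """Join worked chain-of-thought exemplars with a new, unanswered question."""
    return "\n\n".join(list(exemplars) + [f"Q: {question}\nA:"])


prompt = build_cot_prompt(
    COT_EXEMPLARS,
    "A baker makes 4 trays of 6 muffins and sells 10. How many muffins are left?",
)
print(prompt)
```

Because the exemplar shows its working, the model tends to write out its own steps before the answer rather than jumping straight to a number.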

Earlier LLMs such as Gopher gained relatively little performance on these problems from increased model scale. PaLM 540B with chain-of-thought prompting, by contrast, performed strongly on three arithmetic datasets and two common-sense reasoning datasets.

Using 8-shot prompting, PaLM solves 58% of the problems in GSM8K, a benchmark of thousands of challenging grade-school-level math questions. That beats the previous top score of 55%. The earlier result was achieved by fine-tuning the GPT-3 175B model on a training set of 7,500 problems and combining it with an external calculator and verifier.
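
A score like 58% is the share of problems answered correctly. The sketch below shows one way such a score could be computed. It assumes, hypothetically, that each completion ends with "The answer is N.", as in chain-of-thought exemplars. It extracts N and checks it for an exact match against the reference answer. The completions and answers are invented for illustration and are not PaLM outputs.

```python
import re


def extract_answer(completion):
    """Return the number after 'The answer is' (commas removed), or None if it is missing."""
    match = re.search(r"The answer is\s*\$?(-?[\d,]*\.?\d+)", completion)
    return match.group(1).replace(",", "") if match else None


def accuracy(completions, references):
    """Share of problems whose extracted answer exactly matches the reference answer."""
    correct = sum(extract_answer(c) == r for c, r in zip(completions, references))
    return correct / len(references)


completions = [
    "4 trays of 6 is 24 muffins. 24 - 10 = 14. The answer is 14.",
    "She has 3 + 4 = 7 apples. The answer is 7.",
    "The total is 1,200 + 300 = 1,500. The answer is 1,500.",
    "I am not sure.",
]
references = ["14", "8", "1500", "2"]
print(accuracy(completions, references))  # 0.5
```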

This new score is especially notable because it approaches the 60% of problems solved on average by 9-to-12-year-olds. PaLM can also explain original jokes that cannot be found anywhere on the internet.

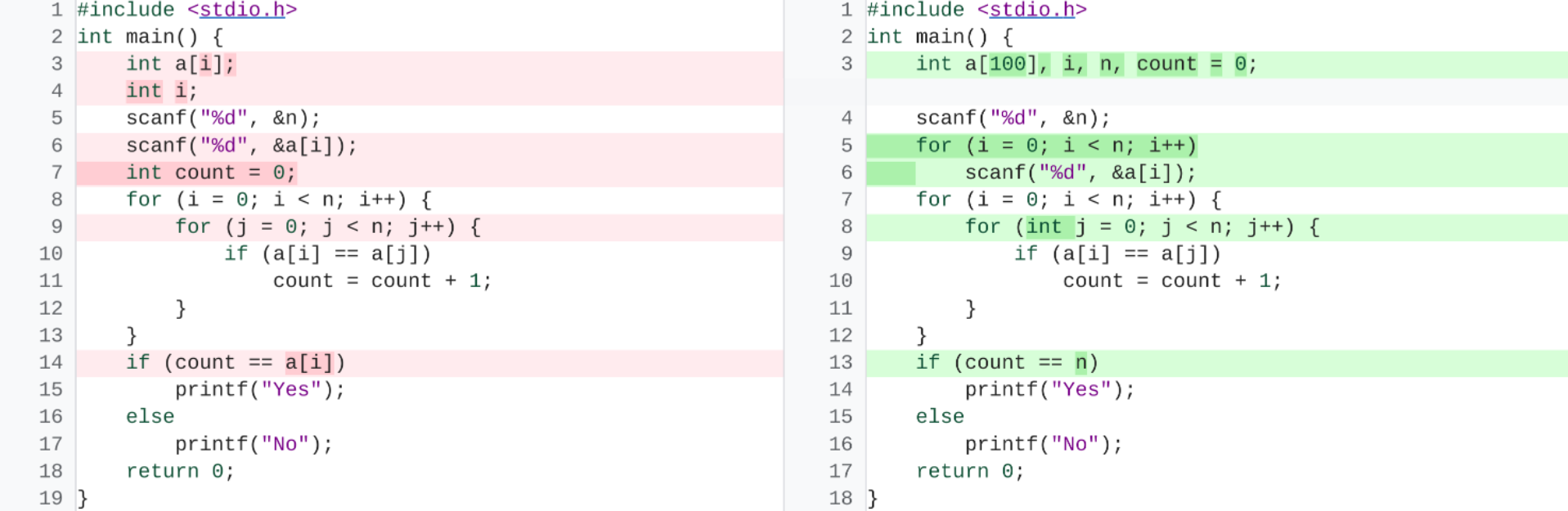

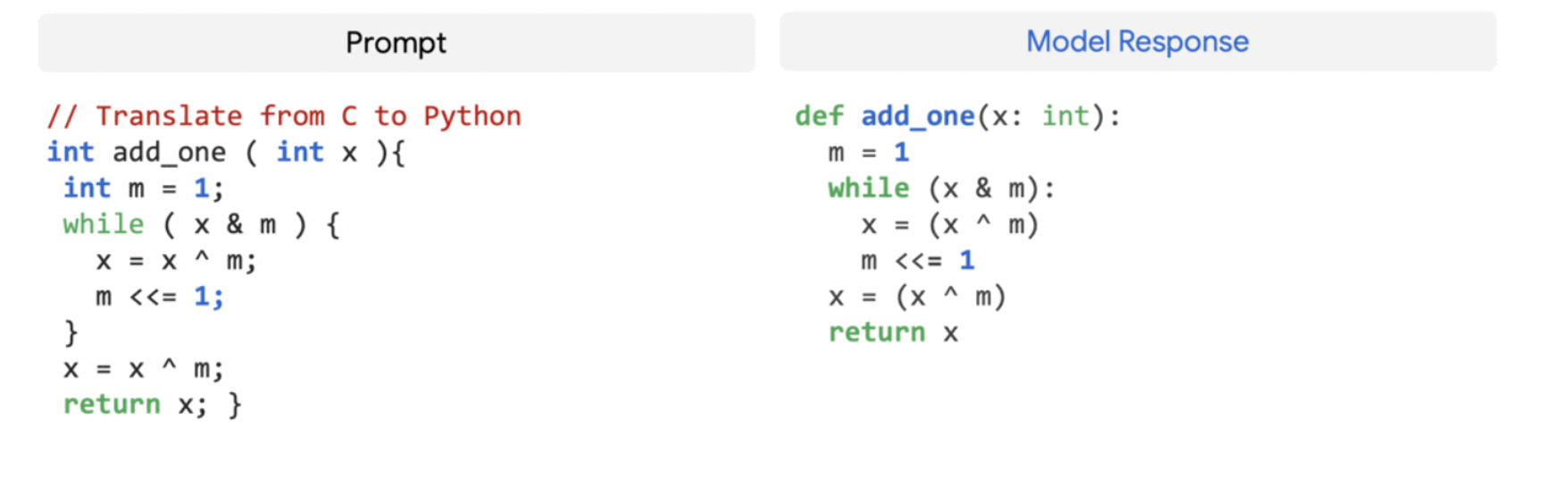

3. Code Generation

LLMs have also been shown to perform well on coding tasks. These include generating code from a natural-language description (text-to-code), translating code between languages, and fixing compilation errors. Only 5% of PaLM 540B's pre-training dataset is code. Even so, it performs well on both coding and natural-language tasks within a single model.
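
Text-to-code output is commonly judged by running it against unit tests rather than by comparing it to reference text. The sketch below shows that check with a hypothetical `passes_tests` helper. Hand-written candidates stand in for model output. Real evaluation harnesses run untrusted generated code in an isolated sandbox, which `exec` here does not provide.

```python
def passes_tests(generated_code, test_code):
    """Run candidate code, then its unit tests, in a fresh namespace; True if nothing raises."""
    namespace = {}
    try:
        exec(generated_code, namespace)  # WARNING: not a sandbox; never use on untrusted code as-is
        exec(test_code, namespace)
    except Exception:  # includes SyntaxError and failed assertions
        return False
    return True


# Hand-written stand-ins for two generated solutions to "sum of squares of a list".
candidate = "def sum_of_squares(nums):\n    return sum(n * n for n in nums)\n"
buggy = "def sum_of_squares(nums):\n    return sum(nums) ** 2\n"
tests = "assert sum_of_squares([1, 2, 3]) == 14\nassert sum_of_squares([]) == 0\n"

print(passes_tests(candidate, tests))  # True
print(passes_tests(buggy, tests))      # False
```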

Its few-shot performance is remarkable: it matches a fine-tuned Codex 12B while training on 50 times less Python code. This result reinforces earlier findings that larger models can be more sample-efficient than smaller ones. The likely reason is that larger models are better at transferring what they learn from multiple programming languages and from plain natural-language data.

Conclusion

PaLM demonstrates that the Pathways system can scale to thousands of accelerator chips across two TPU v4 Pods. It did so by efficiently training a 540-billion-parameter model using a well-studied, well-established recipe for a dense decoder-only Transformer.

By pushing the limits of model scale, PaLM achieves breakthrough few-shot performance on a wide range of natural language processing, reasoning, and coding problems.
