Large neural networks trained for language understanding and generation have achieved impressive results on a wide range of tasks in recent years. GPT-3 showed that large language models (LLMs) can be used for few-shot learning and achieve strong results without large amounts of task-specific data or parameter updates.

Google has introduced PaLM, the Pathways Language Model, as its next-generation AI language model. PaLM is built on Pathways, Google's new AI architecture, with the goal of raising the quality of AI language models.

In this post, we take a detailed look at the PaLM algorithm, including the parameters used to train it, the problem it addresses, and more.

What is Google's PaLM algorithm?

PaLM stands for Pathways Language Model. It is a new model developed by Google and built on the Pathways AI architecture. The main goal of that architecture is a single system that can handle as many as a million different tasks.

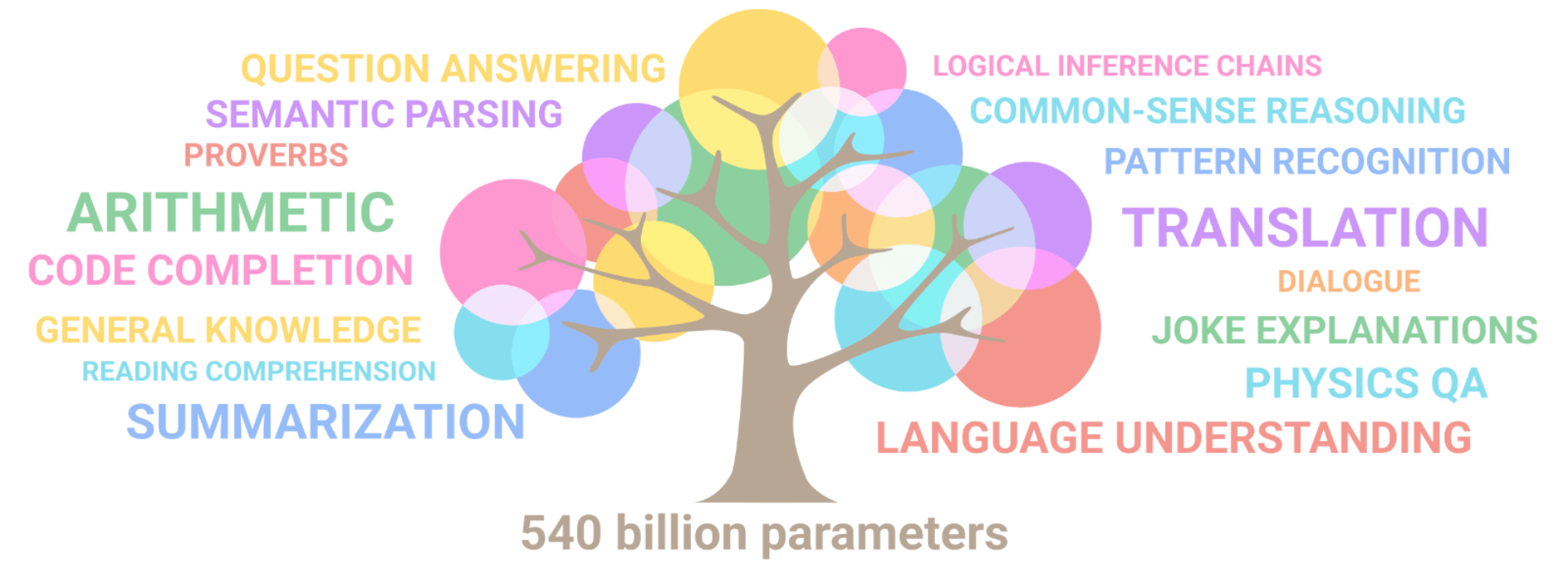

These tasks range from interpreting complex data to deductive reasoning. According to Google, PaLM outperforms earlier state-of-the-art models on many language and reasoning benchmarks, and on some of them it exceeds average human performance.

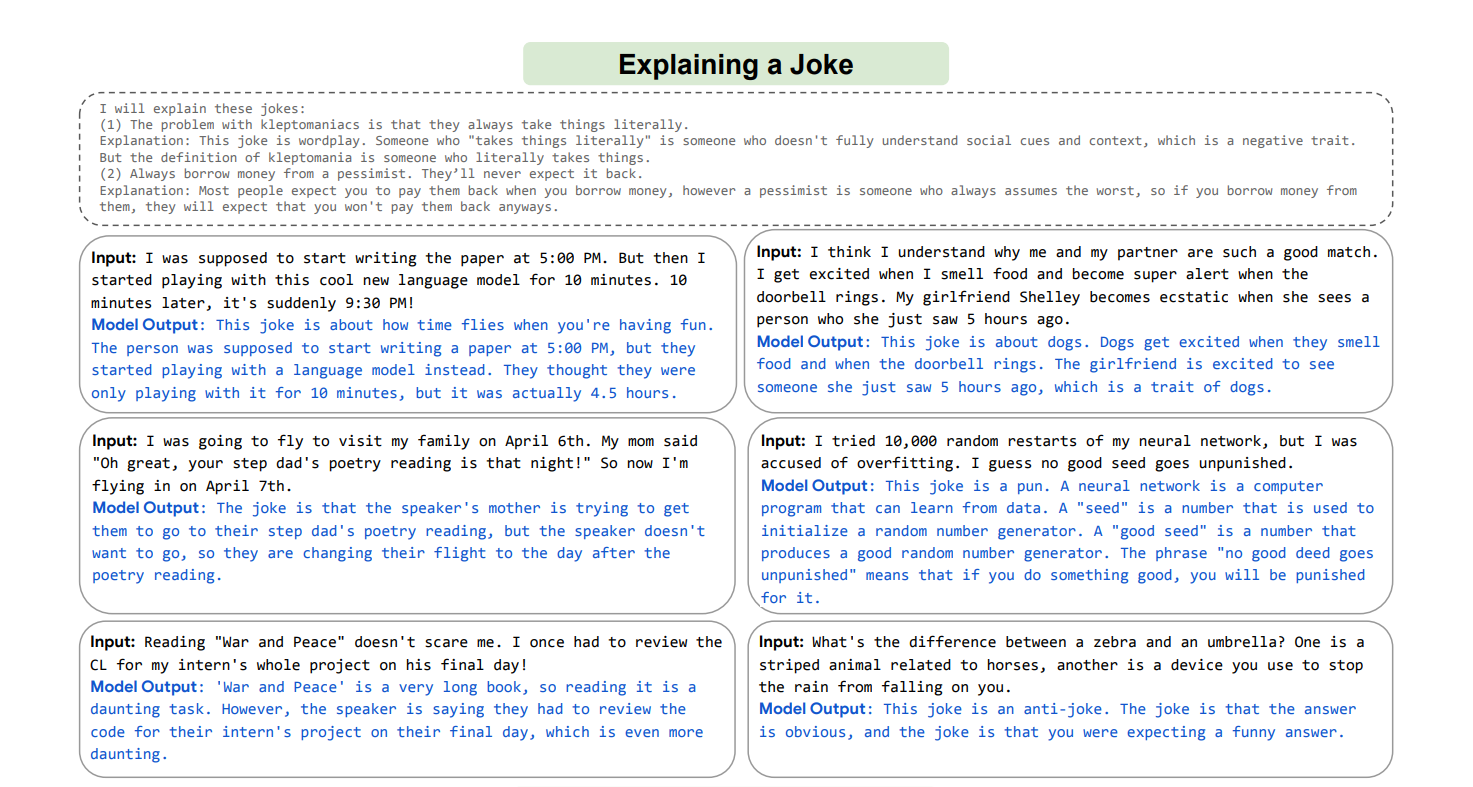

This includes few-shot learning, which mimics how people learn new things and combine different pieces of knowledge to tackle problems they have never seen before: the model applies everything it already knows to a new task after seeing only a handful of examples. One example of this ability in PaLM is explaining jokes it has never encountered.
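To make the idea of few-shot learning concrete, here is a minimal sketch of how a few-shot prompt might be assembled. The function name `build_few_shot_prompt` and the `Input:`/`Output:` layout are assumptions made for illustration; the article does not describe the prompt format Google used.

```python
def build_few_shot_prompt(examples, query):
    """Format (input, output) demonstration pairs followed by an unanswered query.

    The "Input:/Output:" layout is a hypothetical convention, not PaLM's format.
    """
    blocks = [f"Input: {x}\nOutput: {y}" for x, y in examples]
    # The final block leaves the output empty for the model to complete.
    blocks.append(f"Input: {query}\nOutput:")
    return "\n\n".join(blocks)


# Two demonstrations of English-to-French translation, then a new word.
demos = [("sea otter", "loutre de mer"), ("cheese", "fromage")]
prompt = build_few_shot_prompt(demos, "peppermint")
print(prompt)
```

The model's weights are never updated here: the demonstrations live only in the prompt, which is what separates few-shot prompting from fine-tuning.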

PaLM has shown breakthrough capabilities on a variety of difficult tasks, including language understanding and generation, multi-step arithmetic, common-sense reasoning, translation, and more.

It has also demonstrated this ability on multilingual NLP benchmarks. Across the technology industry, PaLM can be applied to tasks such as distinguishing cause from effect, understanding conceptual combinations, playing various games, and more.

It can also produce explicit explanations for scenarios that require a combination of multi-step logical inference, deep language understanding, and world knowledge.

How did Google develop the PaLM algorithm?

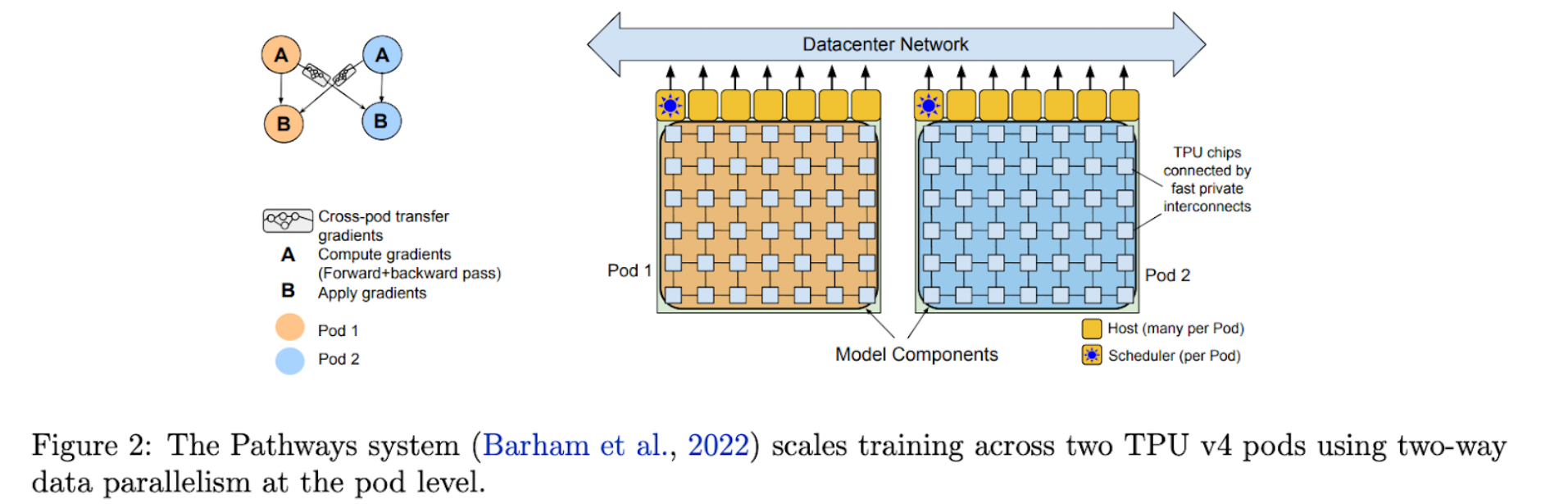

To reach breakthrough performance with PaLM, Google used Pathways to scale the model to 540 billion parameters. It is regarded as a single model that can generalize well across many domains. Pathways itself was developed at Google to support distributed computation across accelerators.

PaLM is a decoder-only Transformer model trained with the Pathways system. According to Google, it achieves state-of-the-art few-shot performance on a range of tasks. PaLM is also the first model for which Pathways was used to scale training to 6144 chips, the largest TPU-based configuration used for training at the time.

The training dataset is a mix of English and multilingual text: high-quality web pages, conversations, books, GitHub code, Wikipedia, and more. PaLM uses a "lossless" vocabulary, meaning it preserves all whitespace and splits Unicode characters that are not in the vocabulary into bytes.
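The two properties of a lossless vocabulary can be illustrated with a toy tokenizer. This is only a sketch: it works character by character, the `<0xNN>` token spelling and the tiny vocabulary are assumptions made for the example, and it is not PaLM's actual tokenizer.

```python
def tokenize(text, vocab):
    """Toy character-level tokenizer with UTF-8 byte fallback."""
    tokens = []
    for ch in text:
        if ch in vocab:
            # Whitespace is kept as a token like any other character.
            tokens.append(ch)
        else:
            # Out-of-vocabulary character: emit one token per UTF-8 byte.
            tokens.extend(f"<0x{b:02X}>" for b in ch.encode("utf-8"))
    return tokens


def detokenize(tokens):
    """Invert tokenize() exactly by rebuilding the original byte sequence."""
    out = bytearray()
    for tok in tokens:
        if len(tok) == 6 and tok.startswith("<0x") and tok.endswith(">"):
            out.append(int(tok[3:5], 16))
        else:
            out.extend(tok.encode("utf-8"))
    return out.decode("utf-8")


# Hypothetical vocabulary: lowercase letters plus space, newline, and tab.
vocab = set("abcdefghijklmnopqrstuvwxyz \n\t")
source = "if x:\n\treturn 'é'"
tokens = tokenize(source, vocab)
restored = detokenize(tokens)
```

Because indentation survives and unknown characters fall back to bytes, `restored` is identical to `source`. That round-trip property is what "lossless" refers to, and it matters most for code, where whitespace can carry meaning.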

PaLM uses a standard decoder-only Transformer architecture with several modifications: SwiGLU activations, parallel layers, RoPE embeddings, shared input-output embeddings, multi-query attention, and no bias terms. Google positions PaLM as a solid foundation for future AI language models built on Pathways.
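Of the components listed above, the SwiGLU activation is the easiest to show in a few lines. The sketch below is a plain-Python illustration of a SwiGLU feed-forward block, Swish(xW) multiplied elementwise by xV and then projected back down, with no bias terms. The names `w_gate`, `w_up`, and `w_down` and the tiny dimensions are assumptions for the example, not values from PaLM.

```python
import math


def swish(z):
    """Swish (SiLU) activation: z * sigmoid(z)."""
    return z / (1.0 + math.exp(-z))


def matvec(x, w):
    """Multiply row vector x (length n) by matrix w (n rows, m columns)."""
    return [sum(xi * row[j] for xi, row in zip(x, w)) for j in range(len(w[0]))]


def swiglu_ffn(x, w_gate, w_up, w_down):
    """Feed-forward block with a SwiGLU activation and no bias terms."""
    gate = [swish(g) for g in matvec(x, w_gate)]   # Swish(x W)
    up = matvec(x, w_up)                           # x V
    hidden = [g * u for g, u in zip(gate, up)]     # elementwise product
    return matvec(hidden, w_down)                  # project back to model width


# Tiny hypothetical example: model width 2, hidden width 1.
x = [1.0, 2.0]
y = swiglu_ffn(x, w_gate=[[1.0], [0.0]], w_up=[[1.0], [1.0]], w_down=[[1.0, 0.5]])
```

The gate path decides how much of each hidden unit passes through, which is the difference between a gated unit like SwiGLU and a plain ReLU or GELU layer.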

Parameters used to train PaLM

In 2021, Google introduced Pathways, an architecture in which a single model can be trained to do thousands or even millions of things. Google calls it a "next-generation AI architecture" because it can overcome a limitation of existing models, which are trained to do only one thing. Rather than extending the capabilities of existing models, new models are usually built from scratch for a single task.

As a result, thousands of separate models have been built for thousands of separate tasks. This is a time-consuming and resource-intensive way to work.

With Pathways, Google aims to show that a single model can handle many different tasks and can draw on and combine its existing skills to learn new tasks faster and more effectively.

Pathways could also support multimodal models that cover vision, language understanding, and audio processing at the same time. For the 540-billion-parameter Pathways Language Model (PaLM), Pathways made it possible to train a single model across multiple TPU v4 Pods.

PaLM, a dense decoder-only Transformer, surpasses the previous state of the art on a wide range of tasks. It was trained on two TPU v4 Pods connected over a data center network (DCN).

Training uses a combination of model parallelism and data parallelism. The researchers used 3072 TPU v4 chips in each Pod, attached to 768 hosts. According to the researchers, this is the largest TPU configuration described to date, and it allowed them to scale training without using pipeline parallelism.
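The data-parallel part of this setup can be illustrated with a toy example. The sketch below is not PaLM's training code: it uses a hypothetical one-parameter model `y = w * x`, splits one batch across two stand-in "pods", and checks that averaging their gradients matches the full-batch gradient. It also works through the chip counts quoted above.

```python
def grad_mse(w, batch):
    """Gradient of mean squared error for the model y = w * x over a batch."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)


batch = [(1.0, 2.0), (2.0, 4.5), (3.0, 5.5), (4.0, 8.5)]
w = 1.0

# Each hypothetical "pod" holds a full copy of w and sees half of the batch.
pod_a, pod_b = batch[:2], batch[2:]
grad_a = grad_mse(w, pod_a)
grad_b = grad_mse(w, pod_b)

# Averaging the per-pod gradients reproduces the full-batch gradient
# (this equality relies on the two halves being the same size).
combined = (grad_a + grad_b) / 2.0
full = grad_mse(w, batch)

# Chip counts quoted in the text.
chips_per_pod, pods, hosts_per_pod = 3072, 2, 768
total_chips = chips_per_pod * pods                # 6144
chips_per_host = chips_per_pod // hosts_per_pod   # 4
```

In a real system the averaging step is a gradient exchange between the Pods over the network, which is why the data center network link mentioned above matters.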

Pipelining, in the CPU sense, means feeding instructions through a sequence of stages so that several are in flight at once. Pipeline model parallelism applies the same idea to training: the layers of the model are divided into stages that can process different micro-batches in parallel.

When one stage finishes the forward pass for a micro-batch, its activations are sent to the next stage. When that next stage finishes its backward pass, the gradients are sent back to the previous stage.
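A small scheduling sketch shows why pipeline parallelism leaves some hardware idle. It models only the forward pass of a simple pipeline in which every stage takes one time step per micro-batch. The function names and the one-step-per-stage assumption are illustrative, not taken from the PaLM work.

```python
def forward_schedule(num_stages, num_microbatches):
    """Return {time_step: [(stage, microbatch), ...]} for the forward pass."""
    schedule = {}
    for stage in range(num_stages):
        for mb in range(num_microbatches):
            # Stage s can start micro-batch m only after stage s-1 has
            # finished it, so the earliest slot is s + m.
            schedule.setdefault(stage + mb, []).append((stage, mb))
    return schedule


def bubble_fraction(num_stages, num_microbatches):
    """Fraction of stage-time slots left idle during the forward pass."""
    total_steps = num_stages + num_microbatches - 1
    busy = num_stages * num_microbatches
    return 1.0 - busy / (num_stages * total_steps)


schedule = forward_schedule(num_stages=2, num_microbatches=3)
idle = bubble_fraction(num_stages=4, num_microbatches=8)
```

Under these assumptions the idle fraction works out to (stages - 1) / (micro-batches + stages - 1), so 4 stages and 8 micro-batches leave 3/11 of the slots empty. Idle time of this kind is one reason a configuration that scales without pipeline parallelism is attractive.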

PaLM's Breakthrough Capabilities

PaLM shows breakthrough capabilities on a number of difficult tasks. Here are a few examples:

1. Language generation and understanding

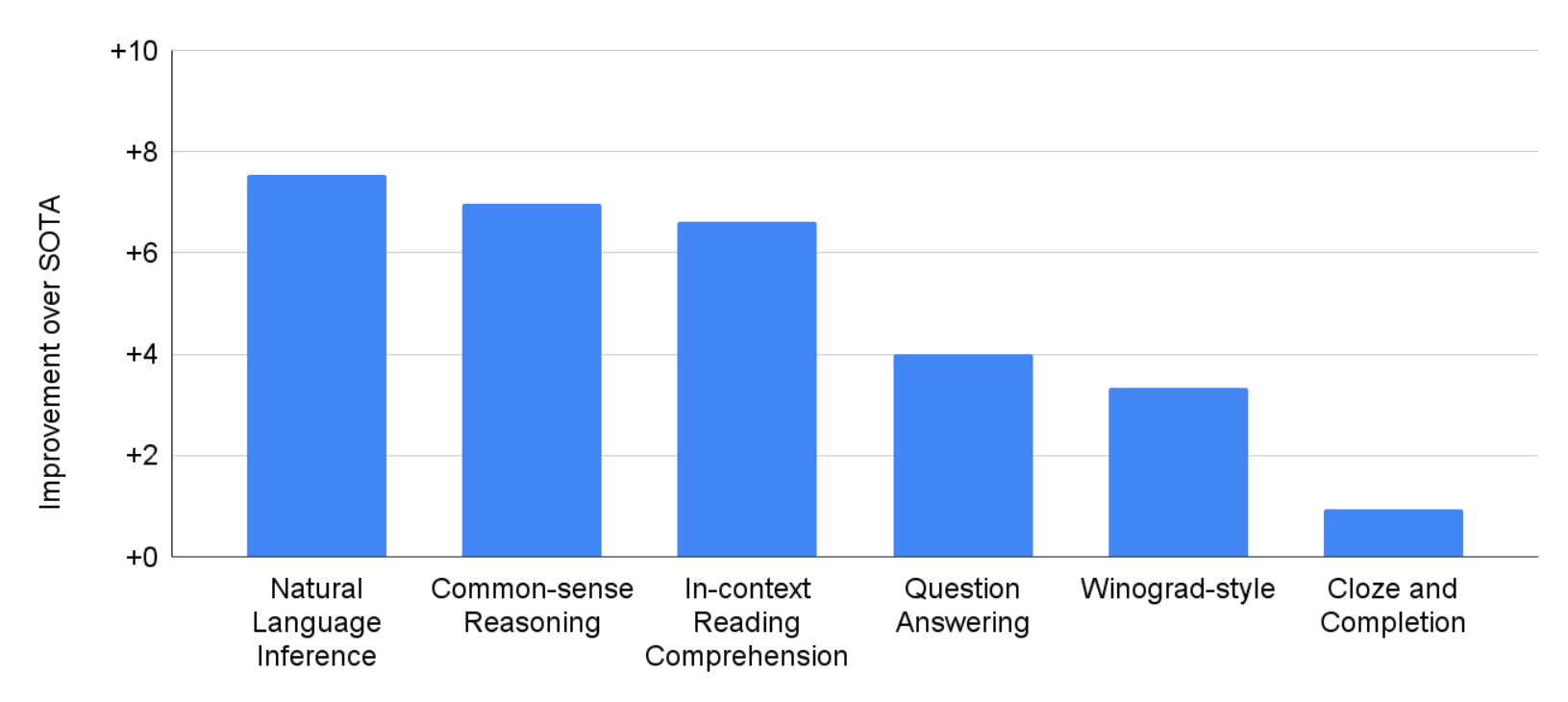

PaLM was evaluated on 29 different English NLP tasks.

In the few-shot setting, PaLM 540B outperformed earlier large models such as GLaM, GPT-3, Megatron-Turing NLG, Gopher, Chinchilla, and LaMDA on 28 of the 29 tasks. These include open-domain closed-book question answering, cloze and sentence-completion tasks, Winograd-style tasks, in-context reading comprehension, common-sense reasoning, SuperGLUE tasks, and natural language inference.

On several BIG-bench tasks, PaLM shows strong natural language understanding and generation capabilities. For example, the model can distinguish cause from effect, understand conceptual combinations in the appropriate context, and even guess a movie from an emoji. Although only 22% of the training corpus is non-English, PaLM also performs well on multilingual NLP benchmarks, including translation, in addition to English NLP tasks.

2. Reasoning

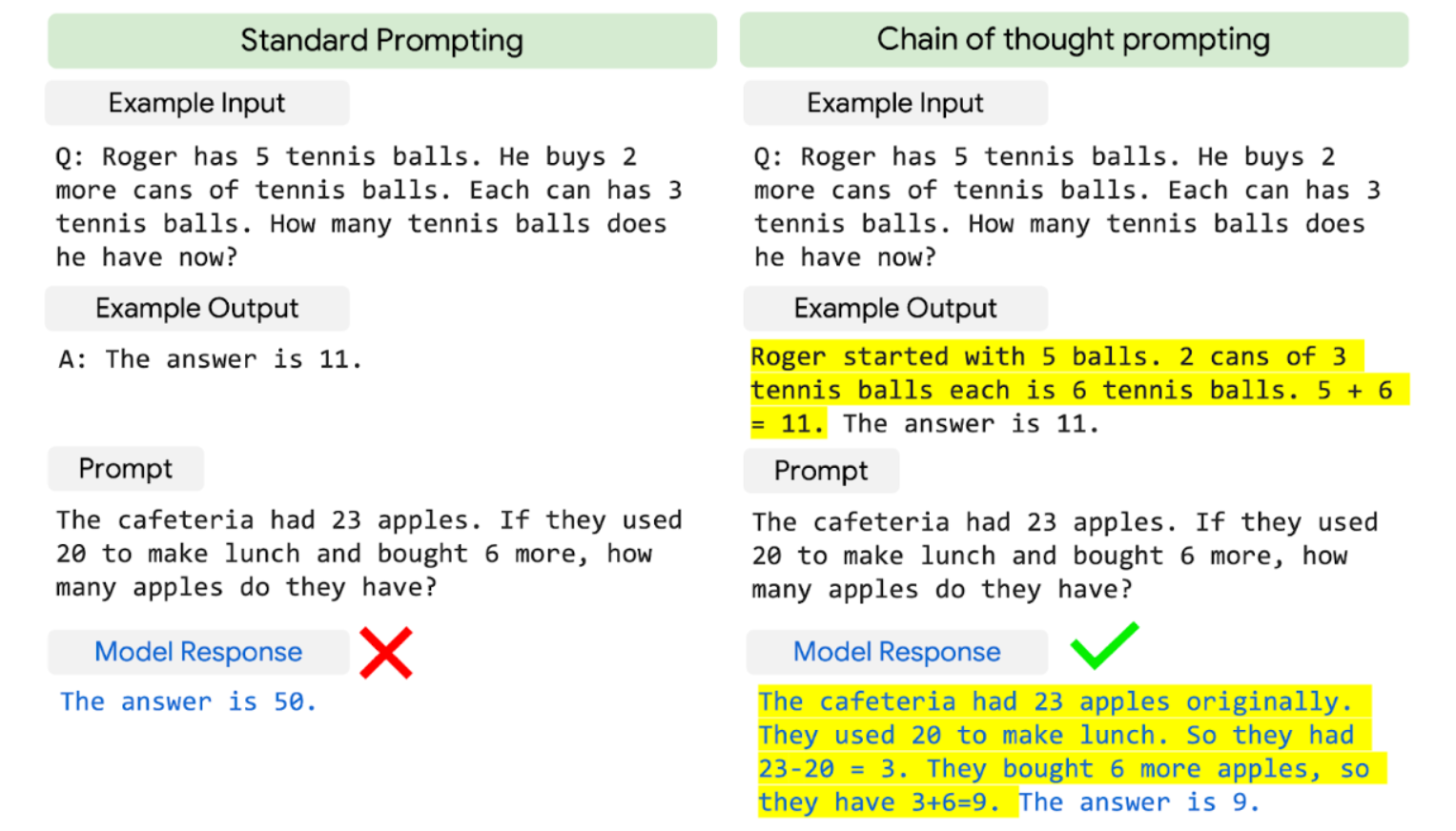

By combining model scale with chain-of-thought prompting, PaLM shows breakthrough capabilities on reasoning problems that require multi-step arithmetic or common-sense reasoning.

Earlier LLMs, such as Gopher, gained relatively little from model scale in terms of reasoning performance. PaLM 540B with chain-of-thought prompting performed strongly on three arithmetic datasets and two common-sense reasoning datasets.
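Chain-of-thought prompting differs from ordinary few-shot prompting in that each demonstration includes the intermediate reasoning, not just the final answer. The sketch below shows one way such a prompt could be built and how a final answer could be read back out of a completion. The `Q:`/`A:` layout, the "The answer is" convention, and both function names are assumptions for illustration; no model is called.

```python
import re


def build_cot_prompt(demos, question):
    """Build a prompt from (question, rationale, answer) demonstration triples."""
    parts = [
        f"Q: {q}\nA: {rationale} The answer is {answer}."
        for q, rationale, answer in demos
    ]
    # The new question is left open so the model writes its own reasoning.
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


def extract_answer(completion):
    """Pull the final number out of a 'The answer is N.' style completion."""
    match = re.search(r"The answer is (-?\d+(?:\.\d+)?)", completion)
    return match.group(1) if match else None


demos = [(
    "Roger has 5 tennis balls. He buys 2 cans of 3 tennis balls each. "
    "How many tennis balls does he have now?",
    "Roger started with 5 balls. 2 cans of 3 balls each is 6 balls. 5 + 6 = 11.",
    "11",
)]
prompt = build_cot_prompt(demos, "A baker has 4 trays of 6 rolls. How many rolls?")
```

An 8-shot prompt in this style would simply pass eight demonstration triples instead of one.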

Using 8-shot prompting, PaLM solves 58% of the problems in GSM8K, a benchmark of thousands of challenging grade-school math questions. That surpasses the previous top score of 55%, which was achieved by fine-tuning the GPT-3 175B model on a training set of 7500 problems and combining it with an external calculator and verifier.

The new score is notable because it approaches the 60% average of problems solved by 9 to 12 year olds. PaLM can also explain original jokes that do not appear anywhere on the web.

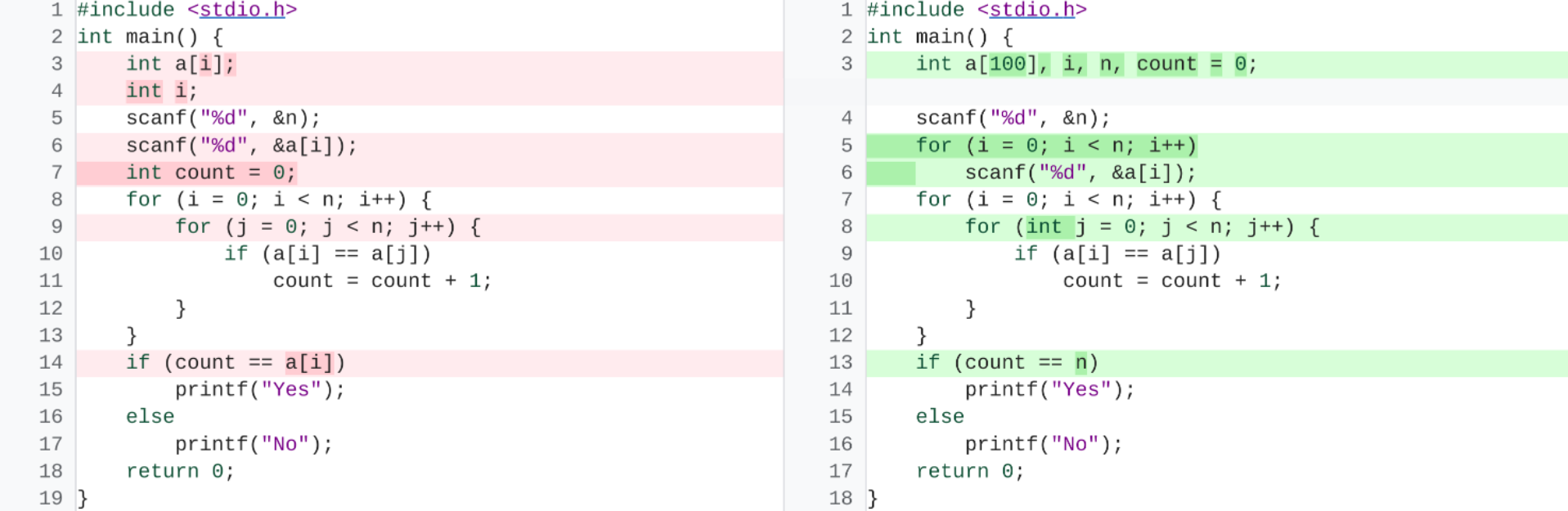

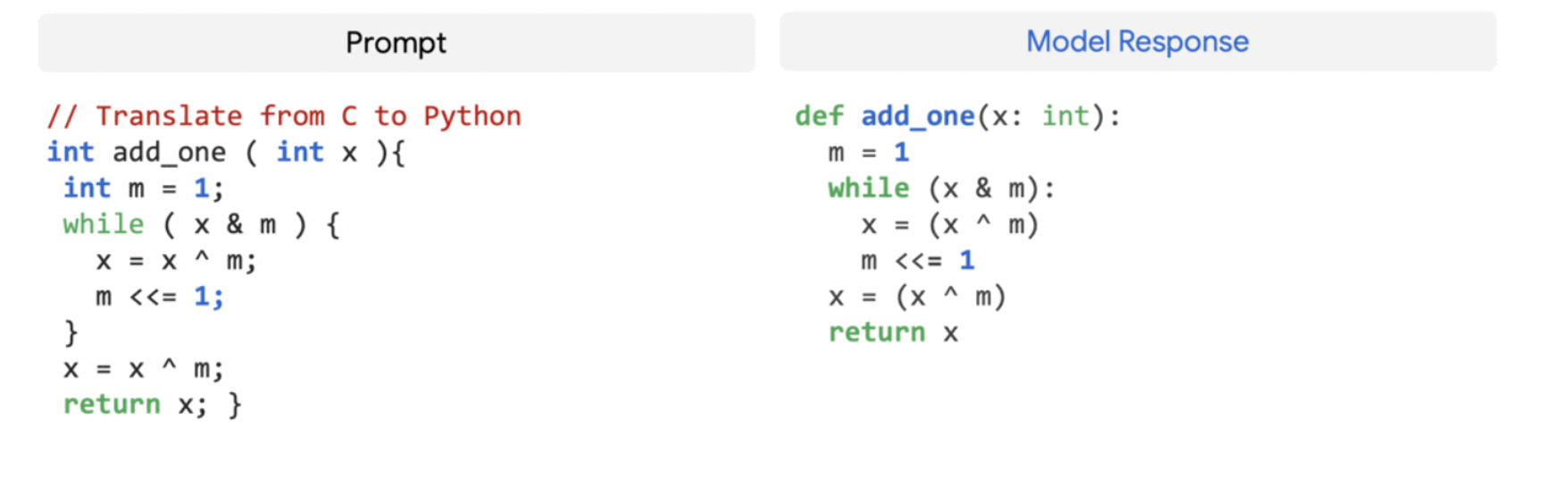

3. Code Generation

LLMs have also been shown to perform well on coding tasks, including generating code from a natural language description (text-to-code), translating code between languages, and fixing compilation errors. Despite having only 5% code in its pre-training dataset, PaLM 540B performs well on both coding tasks and natural language tasks in a single model.

Its few-shot performance is especially notable: it is on par with the fine-tuned Codex 12B while using 50 times less Python code for training. This result reinforces earlier findings that larger models can be more sample-efficient than smaller models, because they transfer learning more effectively from other programming languages and from natural language data.

Conclusion

PaLM demonstrates that the Pathways system can scale to thousands of accelerator chips across two TPU v4 Pods, efficiently training a 540-billion-parameter model with a well-studied, well-established recipe: a dense decoder-only Transformer.

By pushing the limits of model scale, it achieves breakthrough few-shot performance across natural language processing, reasoning, and coding tasks.
